There is a lot of misinformation and a general lack of knowledge about monitors and displays. This article will go over the basics of display technology and elaborate further on popular modern high end display technologies such as variable refresh rate (e.g. VESA AdaptiveSync, FreeSync, G-SYNC), blur reduction (e.g. LightBoost, ULMB, ELMB), HDR, as well as panel technologies such as TN, IPS, VA, “QLED” and OLED.

The Fundamentals

The first thing to know is that display performance, like that of a lot of other computer hardware, is objective and measurable, which makes choosing the right display a much easier task.

So first, let’s define these performance metrics and specify what the ideal targets are.

Color Gamut, Volume, and Depth

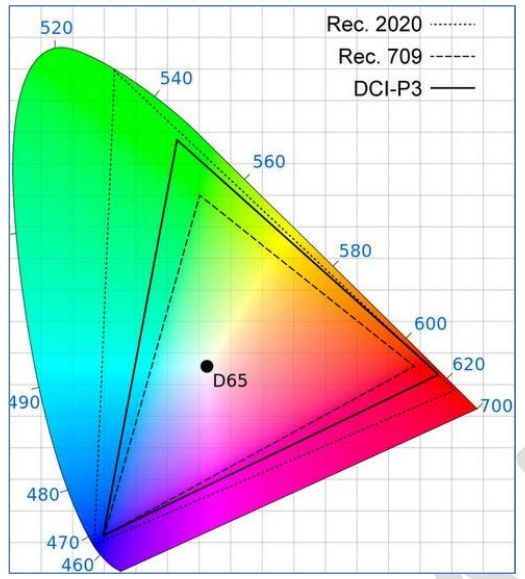

Color Gamut: Also referred to as color space. The range of colors the display can produce. See the visual above comparing three common color spaces: Rec. 709 aka sRGB (what most content is made in, including 99.9% of video games), DCI-P3 (the digital cinema standard), and Rec. 2020 (the Ultra HD standard). HDR has also brought even wider gamuts into use, such as the ACES gamuts.

More is not necessarily better. Well, it is and it isn’t: what you want is for your display to have 100% coverage of the color space that the content is made for, whether it’s a game or a movie. That means 100%, no more and no less. Too little coverage means washed out, undersaturated colors; too much means oversaturation. Ideally, a display would have a separate mode for each color gamut it supports, each covering exactly 100% of that gamut. This is common; for example, displays with 98% or more DCI-P3 coverage tend to have a 100% sRGB mode too.

Color Volume: This refers to how many colors a screen can display across a range of brightness levels, including the darker and lighter shades of each color. Put simply, it is how rich colors remain as brightness scales. Higher is better, with 100% being the highest value. Color volume is measured relative to a target color space, like those in the graph above.

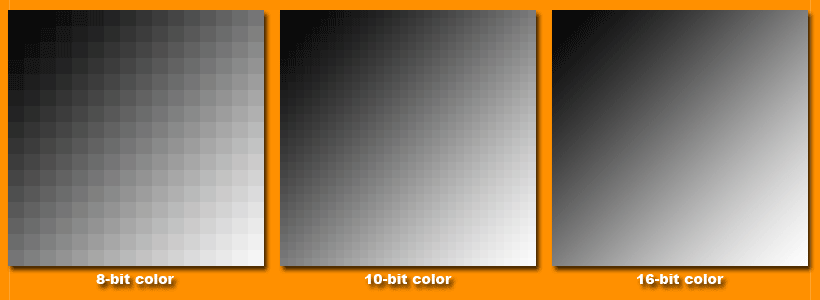

Color Depth: Also known as bit depth. This is the number of bits used for each color channel (red, green, and blue) of a single pixel. More is better (although higher bit depth eats up more display bandwidth, which you might prefer to spend on a higher refresh rate, for example), because more bits means more tones per channel for every pixel, leading to many more possible color tones (but NOT more colors in the gamut/color space sense). In other words, more bit depth means smoother gradients, as illustrated below.

8-bit is the current standard. Generally only HDR movies/TV use 10-bit (Dolby Vision allows 12-bit), and little else in the entertainment industry goes beyond that, largely due to interface bandwidth limitations (HDMI and DisplayPort). Read more about color depth here. The image below is one of the most important parts of that article.

Play any game and look up at the sky when it’s a single color. Notice how much banding there is and how poor the gradient is. Higher color depth would solve that. This is one of the biggest limitations of digital graphics right now, especially in entertainment, since 8-bit is far too little. Even HDMI 2.1 quickly runs out of bandwidth when UHD resolution, high refresh rates, HDR, and higher bit depths are combined without compression.
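To put rough numbers on the bit depth and bandwidth trade-off, here is a small Python sketch. It assumes three color channels per pixel and a full black-to-white ramp spread across a 3840 px wide screen (a real sky spans far fewer tones, so its bands are wider still). The bandwidth figure counts only raw pixel data, ignoring blanking intervals, link encoding overhead, chroma subsampling, and compression, so real link requirements are somewhat higher. The function names are purely illustrative.

```python
def tones_per_channel(bits: int) -> int:
    """Distinct levels one color channel can take at a given bit depth."""
    return 2 ** bits


def total_rgb_values(bits_per_channel: int) -> int:
    """Distinct RGB code values: tones per channel cubed (red, green, blue)."""
    return tones_per_channel(bits_per_channel) ** 3


def band_width_px(ramp_px: int, bits: int) -> float:
    """Width of each visible step when a black-to-white ramp spans ramp_px pixels."""
    return ramp_px / tones_per_channel(bits)


def raw_gbps(width: int, height: int, refresh_hz: int, bits_per_channel: int) -> float:
    """Uncompressed pixel data rate in Gbit/s: 3 channels, no blanking or link overhead."""
    return width * height * refresh_hz * bits_per_channel * 3 / 1e9


HDMI_2_1_MAX_GBPS = 48  # maximum HDMI 2.1 link rate, before encoding overhead

for bits in (8, 10, 12):
    print(f"{bits}-bit: {tones_per_channel(bits)} tones per channel, "
          f"{total_rgb_values(bits):,} RGB values, "
          f"{band_width_px(3840, bits):.2f} px per step across a 3840 px ramp")

for hz, bits in ((60, 8), (240, 10)):
    rate = raw_gbps(3840, 2160, hz, bits)
    print(f"UHD {hz} Hz {bits}-bit: {rate:.1f} Gbit/s "
          f"({rate / HDMI_2_1_MAX_GBPS:.0%} of 48 Gbit/s)")
```

Going from 8-bit to 10-bit quadruples the tones per channel (256 to 1,024) but costs 25% more bandwidth. Even this optimistic raw count puts UHD at 240 Hz with 10-bit color past what a 48 Gbit/s link can carry, which is why such modes rely on Display Stream Compression (DSC).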

Static vs Dynamic Contrast Ratios, Color Accuracy, Color Temperature, Grayscale/White Balance, Gamma, and Brightness

Contrast Ratio: The ratio between the luminance of the brightest white and the darkest black a screen can display. This is one of the most important performance metrics for picture quality. Higher = better, with no ceiling.
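As a quick worked example of that ratio, here is a tiny Python helper. The luminance readings in the loop are made-up illustrative values, not measurements of any specific display.

```python
def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Contrast ratio = luminance of full white / luminance of full black."""
    if white_nits < 0 or black_nits < 0:
        raise ValueError("luminance cannot be negative")
    if black_nits == 0:
        return float("inf")  # pixel fully off, as on OLED
    return white_nits / black_nits


# Hypothetical black level readings, all at the same 120 cd/m2 white level:
for label, black in (("panel A", 0.12), ("panel B", 0.04), ("panel C", 0.0)):
    print(f"{label}: {contrast_ratio(120, black):,.0f}:1")
```

Lowering the black level from 0.12 to 0.04 cd/m2 triples the ratio without touching peak brightness, which is why black level matters just as much as brightness.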

Static contrast refers to contrast ratio measured on a single static brightness setting, so this is the most important contrast measurement for LCD/LED displays.

Dynamic contrast is contrast ratio measured while the brightness level varies. The problem here is that LCD/LED displays are lit by backlighting (in this day and age, LEDs mounted either along the sides or behind the panel).

Higher end LCD/LED TVs, and now monitors, have a feature called “local dimming,” which adjusts the backlight level based on the content on screen, e.g. it dims in dark scenes and brightens in bright scenes (HDR gives finer control and a wider range here). As you can imagine, this works poorly on screens with only a small number of backlight “dimming zones,” because each zone has to pick a single brightness for everything it covers, leading to an excessively dim or excessively bright picture depending on the content.

The band-aid workaround for this is increasing the number of dimming zones. A 1920 x 1080 display has 2,073,600 pixels, so it would really need 2,073,600 dimming zones, while a UHD display needs 4x that, which is 8,294,400. But even the highest end displays have only around 1,000 dimming zones, so they always exhibit a terrible halo effect in high contrast scenes, with bright spots bleeding into darker ones. This makes HDR effectively useless on LCD/LED displays. HDR (e.g. VESA DisplayHDR and Dolby Vision) really needs a self-emissive display like OLED to not look terrible.

Don’t roll your eyes. OLED displays have an OLED for every pixel, so yes, 4K (3840 x 2160) OLED TVs actually have 8,294,400 “dimming zones,” so to speak. Each pixel dims as on-screen content darkens, all the way to turning completely off for true blacks. This means OLED naturally has a contrast ratio of infinity (the peak brightness is divided by a black level of zero). In a sense this is always “dynamic contrast,” since there is no single static backlight level; every pixel sets its own luminance. OLED is just too damned good for that distinction.
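To make the zone math concrete, here’s a rough Python sketch of how many pixels share one backlight zone. It assumes zones are spread evenly and are roughly square, which real backlight layouts only approximate, and it uses the ~1,000 zone figure mentioned above.

```python
import math


def zone_footprint(width: int, height: int, zones: int) -> tuple:
    """Average pixels per dimming zone and the side length of an equivalent square zone."""
    pixels_per_zone = width * height / zones
    return pixels_per_zone, math.sqrt(pixels_per_zone)


for name, zones in (("~1,000-zone LCD", 1_000), ("UHD OLED (per-pixel)", 3840 * 2160)):
    per_zone, side = zone_footprint(3840, 2160, zones)
    print(f"{name}: {per_zone:,.1f} pixels per zone, about {side:.0f} x {side:.0f} px")
```

On such an LCD, a small bright object (a star, a cursor, a subtitle) lights up its whole zone, a patch of roughly 90 x 90 pixels, which is the halo described above. With per-pixel control that patch shrinks to the pixel itself.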

For LCD, it is wisest to ignore outrageous dynamic contrast figures like 1,000,000:1, because dynamic contrast always looks awful on LCD, whether you’re dealing with $2,000 UHD 120 Hz monitors or Samsung’s best QLED TVs (QLED = an LCD, typically VA, with a quantum dot layer added to improve color; the liquid crystals are still there). The haloing always ruins it, especially in HDR content.

Here is a table for average static contrast per display type.

| Panel Type | Common Usage | Typical Static Contrast Ratio |
| --- | --- | --- |
| TN | Monitors | 800:1 – 1,000:1 |
| IPS | Monitors, Cheapest TVs, Smartphones | 900:1 – 1,300:1 |
| VA | High End Monitors and TVs | 5,000:1 – 7,000:1 for high end TVs, 2,000:1 – 3,000:1 for monitors |
| Plasma | High End 2000s TVs | 3,500:1 – 29,000:1 |
| OLED | High End TVs, High End Laptops, Smartphones | Infinite |

While the image below is overexposed, it shows the difference between true blacks (OLED) and what LCD calls black.

Color Accuracy: Measured in delta E, a color difference metric standardized by the International Commission on Illumination (CIE). The formula is complex and has only become more complex over time, but its goal is always to measure color difference. The end result: in a calibration report, the lower the value (down to 0), the more accurate the color. Values over 3 start to become noticeably inaccurate.
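The original 1976 version of the formula (CIE76) is simple enough to show: it is just the straight-line distance between two colors in the CIELAB color space. Calibration software commonly reports the newer CIEDE2000 formula instead, which adds many perceptual corrections, so treat this Python sketch (Python 3.8+ for `math.dist`) as an illustration of the idea only. The target and measured Lab values are hypothetical.

```python
import math


def delta_e_76(lab_target, lab_measured) -> float:
    """CIE76 color difference: Euclidean distance between two (L*, a*, b*) triples."""
    return math.dist(lab_target, lab_measured)


target = (50.0, 0.0, 0.0)     # hypothetical neutral mid gray
measured = (51.0, 1.0, -2.0)  # hypothetical colorimeter reading
error = delta_e_76(target, measured)
verdict = "noticeable" if error > 3 else "hard to notice"
print(f"delta E (CIE76) = {error:.2f}, {verdict}")
```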

Color Temperature: A characteristic of visible light, measured in kelvin (K). It affects the overall color tone of the light emitted by the display. Higher values = cooler (more blue), lower values = warmer (more orange/red). “Low Blue Light” modes simply lower the color temperature severely. Ideally, the color temperature should be perfectly neutral so as not to corrupt the image, so the target is 6500 K (D65 white). I recommend ignoring all standard picture modes that don’t have 6500 K (D65) whites.

Grayscale/White Balance: Measured in delta E, this is like color accuracy but only for the grayscale (whites, grays, blacks). So again, the ideal value is 0, and lower is better.

Gamma: Describes how brightness rises across the grayscale, from black to white. The ideal value for accurate viewing of the grayscale is 2.2, so try to get as close to that as possible for all shades of gray.
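A simple way to see what the gamma value does is a pure power-law model: relative luminance equals the input signal (0 to 1) raised to the gamma exponent. This Python sketch is idealized (it ignores the panel’s black level and the exact transfer functions used in practice), and the 120 cd/m2 peak is just the SDR target used elsewhere in this article.

```python
def luminance_nits(signal: float, gamma: float = 2.2, peak_nits: float = 120.0) -> float:
    """Idealized display response: peak * signal ** gamma, with signal in [0, 1]."""
    if not 0.0 <= signal <= 1.0:
        raise ValueError("signal must be between 0.0 and 1.0")
    return peak_nits * signal ** gamma


for g in (2.0, 2.2, 2.4):
    print(f"gamma {g}: 50% gray -> {luminance_nits(0.5, g):.1f} cd/m2")
```

Black and white stay the same at every gamma; only the shades in between move. A lower gamma lifts them (a washed out look), a higher one pushes them down (a darker, crushed look).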

Brightness: This is just the general brightness or backlight level (LCD) of the display. Brightness setting impacts the performance in all other areas so it is quite important.

LCD displays are designed to be run at around 120 cd/m2, but the ideal brightness setting depends on your environment, namely the ambient light of the room. And HDR has changed everything; the brighter the HDR peak brightness, the better (but again this doesn’t mean much when you have an LCD panel with blooming/haloing from local dimming).

The display’s brightness shouldn’t be overpowering, so that it doesn’t fatigue your eyes. Higher brightness also hurts perceived contrast by raising black levels, ESPECIALLY on IPS panels.

My recommendation is to adjust room lighting to suit the display’s ideal ~120 cd/m2 target (SDR), NOT the other way around. This means no ambient lighting except a bias light: a 6500 K white light on the back of the display, evenly distributed against the wall behind it, which improves perceived contrast and black levels (seen in the video at the bottom of this article).

Response Time: How quickly pixels change from one color to another, measured in milliseconds. Faster is better. Modern displays use overdrive, which applies higher voltages to speed up pixel transitions, but this can cause its own artifacts (coronas), which reviewers call overshoot. The table below lists response time ranges (with acceptable overshoot levels) for modern panel types, based on higher end gaming monitors and TVs. Of course, generic $100-200 monitors and $300 TVs are nowhere near this fast, though LCD panels have sped up drastically in recent years.

| Panel Type | Average Response Times |
| --- | --- |
| TN | 1-4 ms |
| IPS | 2-5 ms at 240-360 Hz, 4-6 ms at 120-144 Hz |
| VA | 1-5 ms with up to 25 ms peaks at 240+ Hz, 2-6 ms with up to 40 ms peaks at 120-144 Hz |
| OLED | 0.2-1 ms with a few ~8 ms peaks |
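A rule of thumb helps explain why higher refresh rates demand faster panels: if a pixel transition takes longer than one refresh interval, it is still unfinished when the next frame arrives, which shows up as smearing or ghosting. Here is a small Python sketch of that comparison; the response times passed in are just example values taken from the ranges in the table.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Duration of one refresh cycle in milliseconds."""
    return 1000.0 / refresh_hz


def completes_within_refresh(response_ms: float, refresh_hz: float) -> bool:
    """True if a pixel transition finishes before the next refresh starts."""
    return response_ms <= frame_time_ms(refresh_hz)


for hz in (60, 120, 144, 240, 360):
    print(f"{hz:>3} Hz: {frame_time_ms(hz):5.2f} ms per refresh")

# A 25 ms VA peak at 240 Hz spans several refresh cycles:
print(f"25 ms at 240 Hz = {25 / frame_time_ms(240):.1f} refreshes")
```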

So these are the important metrics and the target for each of them. Accuracy is achieved via hardware calibration, using a colorimeter or spectrophotometer. Few displays are well calibrated out of the box, though high end TVs typically have far better factory calibration than monitors. Here is what a detailed calibration report looks like.

Panel Coating

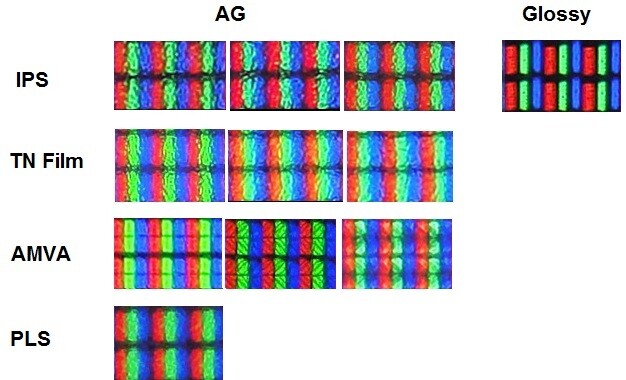

This is something that’s often overlooked with displays. Almost all monitors use really blurry matte coatings that damage visual quality. To see how damaging it really is, look at how unclear these matte coatings are. The images below are from TFTCentral.

Here is their excellent article on the subject. High end TVs use much better coatings that are both glossy and great at stopping glare. Plasma TVs had one of the most amazing coatings ever seen though, demonstrated by this photo below showcasing glossy LCD vs semi-glossy LCD vs plasma.

Ultimately, nasty matte coatings are just one of many factors (the others described above) responsible for bringing monitor image quality far below that of TVs.

Few websites go this far when reviewing displays. For TVs, rtings.com is one of the only ones. For monitors, check out prad.de, tftcentral.co.uk, and pcmonitors.info.

Advanced Technologies

Now we will look at advanced technologies like high refresh rate, variable refresh rate (G-SYNC, FreeSync, AdaptiveSync), HDR, and blur reduction via strobing (LightBoost/ULMB and others).

High Refresh Rate

First and foremost I should discuss the advantages of high refresh rate (more than 60 Hz). The default refresh rate (so to speak) of LCD displays is 60 Hz. That’s the standard, that’s what nearly every LCD display is on the market, whether it’s a TV or a monitor, whether it uses a TN, IPS, or VA panel.

Fake High Refresh Rate: Any TV that’s listed as 240 Hz or above is telling you a blatant, stupid lie. Most TVs listed as 120 Hz are lying equally so. Almost every TV is 60 Hz, but this will finally change with HDMI 2.1 (HDMI 2.0 has led to some 120 Hz TVs, mostly but not only from LG and Samsung).

The question is, is there any truth to these lies? If they’re really 60 Hz TVs, then why do manufacturers claim 120 Hz or 240 Hz? All that really means is that those TVs use processing such as motion interpolation to simulate 120 Hz or 240 Hz motion, while the panel itself still refreshes at 60 Hz.

Such interpolation can have positive or negative effects depending on the refresh rate it’s converting to and on the model itself. Both 120 Hz and 240 Hz interpolation should be beneficial for watching TV shows or movies, because those are typically shot at 24 FPS, and 60 Hz is not a whole multiple of 24 FPS while 120 Hz and 240 Hz are. Thus, interpolation to 120/240 Hz can potentially deliver judder-free movie/TV playback.
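Here is a Python sketch of the 24 FPS arithmetic, assuming the simplest approach of repeating each film frame for a whole number of refreshes (no interpolation at all). It shows the uneven 2:3 pulldown cadence at 60 Hz, with frames held alternately for 2 and 3 refreshes, while 120 Hz and 240 Hz can hold every frame for the same length of time. The function name is illustrative.

```python
def repeat_cadence(content_fps: int, refresh_hz: int, frames: int = 4) -> list:
    """Refreshes each source frame is held for when frames are simply repeated."""
    cadence = []
    refreshes_used = 0
    for i in range(1, frames + 1):
        # Total refreshes elapsed by the end of frame i, rounded down to a whole refresh.
        frame_end = (i * refresh_hz) // content_fps
        cadence.append(frame_end - refreshes_used)
        refreshes_used = frame_end
    return cadence


for hz in (60, 120, 240):
    print(f"24 FPS on {hz} Hz: {repeat_cadence(24, hz)}")
```

Uneven hold times are what you perceive as judder, most visibly in slow camera pans.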

But let’s look back at true high refresh rate panels.

Benefits of Higher Refresh Rate:

- Allows the monitor/TV to display higher frame rates. A 60 Hz display can only physically display 60 FPS; a 120 Hz display can display 120 FPS. There used to be a lot of confusion over this topic, but these days it’s not so bad. Console gamers still like to say “the human eye can’t see more than 60 FPS!” Some even say that we can’t see more than 30 FPS. Those are random, uninformed myths. In reality, the average person can easily detect differences up to around 150 FPS or more; around this point it becomes harder to tell, and some people fare better than others (some may easily see the difference between 200 FPS and 144 FPS, for example, while others might not).

- Reduced eye strain.

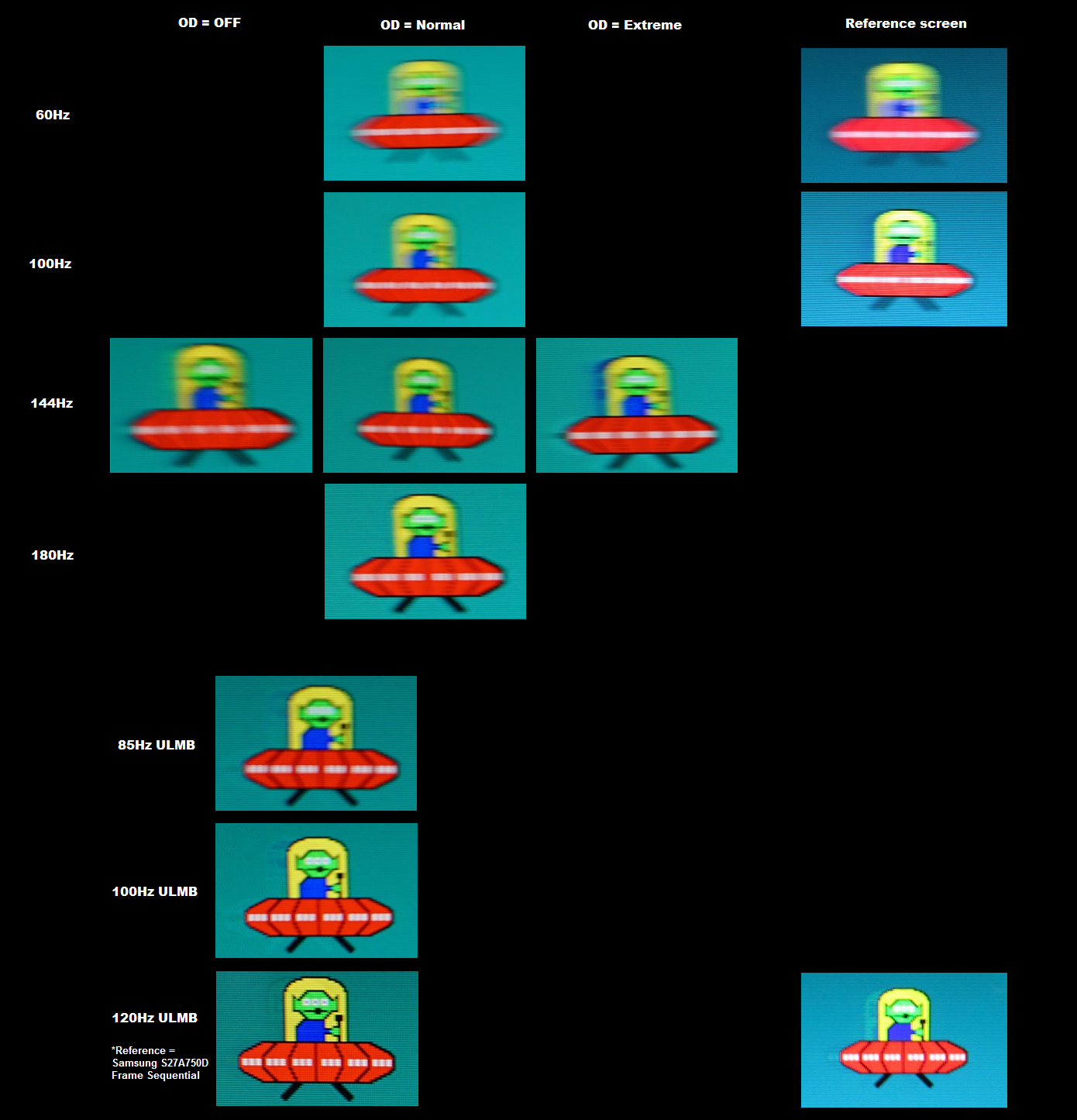

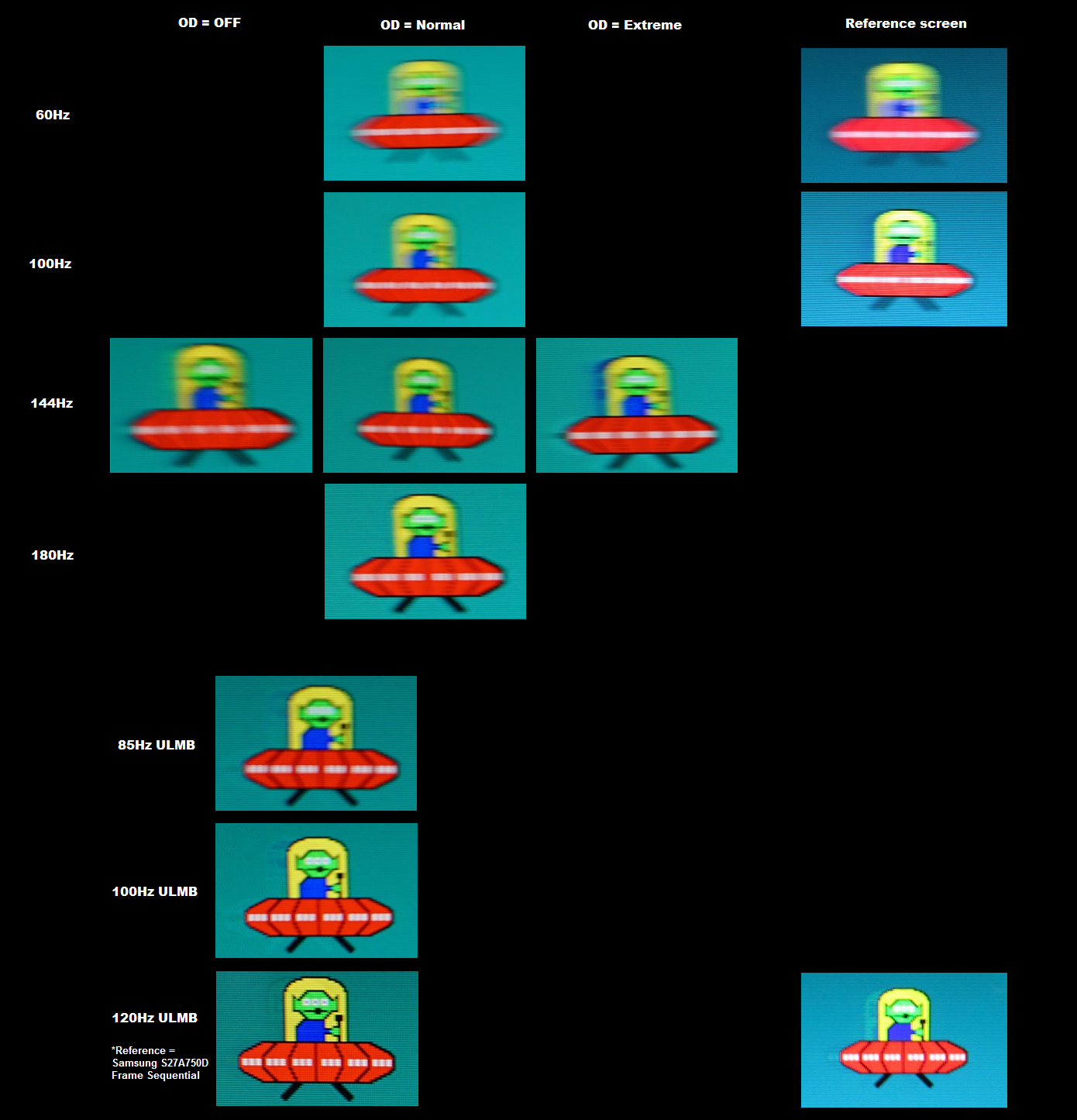

- Lower Response Times – Response time refers to pixel response time. Higher = more motion blurring. Higher refresh rates lead to lower response times, thus less motion blur. See the image below from this TFTCentral review.

This image clearly demonstrates the reduced motion blur from higher refresh rate, which is because it has a much lower response time at the higher refresh rates. They measured an average of 8.5 ms pixel response time at 60 Hz, 6.5 ms response time at 100 Hz, and 5.2 ms response time at 144 Hz.

The advantage doesn’t stop at 144 Hz. Below is an example from PCMonitors who tested the ASUS ROG Swift PG248Q. Look at the 60 Hz vs 100 Hz vs 144 Hz vs 180 Hz comparison. 180 Hz produces a noticeably clearer image than 144 Hz.

What this translates to in practice is that any moving content on screen appears less blurry, especially when you try to follow something in motion.
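A common rule of thumb, popularized by Blur Busters, quantifies this for sample-and-hold displays: when your eyes track a moving object, the perceived blur width in pixels is roughly the motion speed in pixels per second multiplied by how long each frame stays visible (its persistence). The Python sketch below assumes instantaneous pixel transitions and a frame rate equal to the refresh rate, so persistence equals one refresh interval; real panels add their response time on top. The 960 px/s speed is a hypothetical example.

```python
def tracking_blur_px(speed_px_per_s: float, persistence_ms: float) -> float:
    """Approximate eye-tracking blur width: speed x persistence."""
    return speed_px_per_s * persistence_ms / 1000.0


def sample_and_hold_persistence_ms(refresh_hz: float) -> float:
    """On a sample-and-hold display each frame is held for a full refresh."""
    return 1000.0 / refresh_hz


speed = 960  # hypothetical object moving 960 pixels per second
for hz in (60, 144, 180, 240):
    blur = tracking_blur_px(speed, sample_and_hold_persistence_ms(hz))
    print(f"{hz:>3} Hz: ~{blur:.1f} px of blur")
```

Strobing backlights attack the same term from the other side: they shorten persistence without raising the refresh rate.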

Disadvantages of High Refresh Rate

- Taxes your graphics card a bit more. Not that this is a big deal at all; higher refresh rates are a universally good thing.

NVIDIA G-SYNC / AMD FreeSync / VESA AdaptiveSync

These technologies are well established by now, so I’ll go over these before discussing blur reduction.

All of these do the same thing; they’re just implemented differently. AMD FreeSync is actually VESA AdaptiveSync, adopted by AMD and given a new name.

But first, what are G-SYNC, FreeSync, and AdaptiveSync, or simply Variable Refresh Rate (VRR – the name for this technology in general)? The answer lies in V-Sync, the technology that has been around forever. It was invented to remove screen tearing.

V-Sync (Vertical Synchronization) – Forces a game/application’s frame rate to match the display’s refresh rate. Alternatively it can be set to 1/2 refresh rate, 1/3 refresh rate, 1/4 refresh rate. All of this is done to remove screen tearing, an annoying issue demonstrated here. Screen tearing occurs when a game’s frame rate does not match the display’s refresh rate, and it’s more common at lower frame rates. V-Sync does remove tearing, but has several flaws which are listed below.

Flaws of V-Sync

- Stutter – If the frame rate can’t match the refresh rate, then many games will switch to V-Sync at half refresh rate instead of full refresh rate (e.g. 30 FPS instead of 60 FPS, which is how most console games operate). But the transition between V-Sync full refresh rate and V-Sync half refresh rate creates stutter. Stutter is demonstrated here.

- Input Lag – V-Sync increases input lag, which can sometimes be felt. Triple buffered V-Sync, which should always be used (if available) if you’re going to use V-Sync, minimizes additional input lag compared to double buffering (which consoles use, though I’m not 100% sure whether the latest consoles are double buffered). Read this if you want to learn more about buffering. Triple buffering can add no more than 1 frame of lag (16.67 ms at 60 Hz, 8.33 ms at 120 Hz) as long as V-Sync is active and the frame rate is consistent.
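The stutter described above comes from how double buffered V-Sync snaps the frame rate to whole fractions of the refresh rate. Here is a simplified Python model, assuming every frame takes the same render time and the GPU must wait for the next vertical blank before starting another frame (triple buffering relaxes that wait). The function name is illustrative.

```python
import math


def double_buffered_vsync_fps(render_ms: float, refresh_hz: float) -> float:
    """Effective frame rate when each finished frame waits for the next refresh."""
    if render_ms <= 0:
        raise ValueError("render time must be positive")
    refresh_interval_ms = 1000.0 / refresh_hz
    # Each frame occupies a whole number of refresh intervals.
    intervals = math.ceil(render_ms / refresh_interval_ms)
    return refresh_hz / intervals


for render_ms in (15.0, 17.0, 34.0):
    print(f"{render_ms} ms per frame at 60 Hz -> "
          f"{double_buffered_vsync_fps(render_ms, 60):.0f} FPS")
```

Missing the 16.67 ms budget by a single millisecond halves the frame rate, and a game hovering around that budget keeps flipping between 60 and 30 FPS, which is the stutter.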

NVIDIA invented Adaptive V-Sync, which simply disables V-Sync whenever the game can’t maintain the target frame rate. This solves the stuttering issue, but obviously reintroduces screen tearing while V-Sync is disabled.

The solution to V-Sync’s flaws? Another NVIDIA invention, called G-SYNC. We really have so much to thank NVIDIA for, as they either invented or pushed forward some of the most game changing technology in history. G-SYNC is one, as is unified shader processing (introduced with the GeForce 8 series graphics cards / G80 GPU), mesh shading (introduced with Turing and now a DirectX 12 Ultimate and Vulkan standard), ReSTIR path tracing, micro-meshes, and DLSS. LightBoost is another; not an original invention, but if it weren’t for LightBoost we wouldn’t have a bunch of blur reduction monitors today.

NVIDIA G-SYNC – Hardware implementation of variable refresh rate technology. Instead of forcing a game’s frame rate to match the refresh rate (or a fraction of the refresh rate), which is what V-Sync does, G-SYNC works in reverse by syncing the display’s refresh rate to the game’s frame rate.

This removes all screen tearing without any of the downsides of V-Sync. Input lag? Virtually none is added. The G-SYNC hardware module is also the monitor’s scaler, and a very fast one, with perhaps the lowest input lag ever. The downside is that it has zero resolution scaling capability, so running non-native resolutions via DisplayPort on a G-SYNC monitor relies on GPU scaling, which looks awful.

Stuttering? None, and G-SYNC actually reduces microstuttering, leading to a smoother game experience in every conceivable way. This test demonstrates microstuttering, which is basically a less severe form of stuttering. Note that G-SYNC obviously only works on modern NVIDIA graphics cards, and it only works over DisplayPort, though G-SYNC Ultimate and G-SYNC Compatible work over HDMI too.

Here is a G-SYNC simulation demo.

G-SYNC always works right up to the monitor’s maximum refresh rate and down to about 30 Hz. Below 30 FPS, G-SYNC switches to frame doubling (aka G-SYNC @ 1/2 refresh rate) down to about 15 FPS. Under 15 FPS, it switches to frame tripling (aka G-SYNC @ 1/3 refresh rate) down to 10 FPS or so (because 10 FPS x 3 = 30 Hz, and G-SYNC doesn’t work below that).
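The doubling and tripling thresholds above boil down to picking the smallest whole multiplier that lifts the refresh rate back above the VRR floor. Here is that logic as a hypothetical Python sketch, using the 30 Hz floor from the text and a 144 Hz ceiling as example defaults; the actual G-SYNC and FreeSync LFC logic may choose multipliers differently (for example, to leave headroom).

```python
def lfc_refresh(fps: float, min_hz: float = 30.0, max_hz: float = 144.0) -> tuple:
    """Pick how many times to show each frame so the refresh stays in the VRR range.

    Hypothetical model: the smallest whole multiplier that lifts the refresh
    rate to min_hz or above.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    if fps >= min_hz:
        return 1, min(fps, max_hz)  # above max_hz, V-Sync or tearing takes over
    multiplier = 2
    while fps * multiplier < min_hz:
        multiplier += 1
    if fps * multiplier > max_hz:
        raise ValueError("VRR range too narrow to compensate this frame rate")
    return multiplier, fps * multiplier


for fps in (60, 25, 15, 12, 10):
    multiplier, hz = lfc_refresh(fps)
    print(f"{fps:>2} FPS -> each frame shown {multiplier}x at {hz} Hz")
```

With these defaults, 15 FPS is still doubled to 30 Hz, anything below it gets tripled, and 10 FPS lands exactly on the 30 Hz floor, matching the behavior described above.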

A newer version of G-SYNC exists; it was formerly called G-SYNC HDR but is now called G-SYNC Ultimate. It has tighter standards, since it requires HDR and originally required a peak brightness of 1,000 nits.

NVIDIA G-SYNC Compatible: This is NVIDIA’s implementation of VESA AdaptiveSync. This was always inevitable as the open standard was destined to reign supreme here.

Note about using G-SYNC: just use the default settings applied when you enable G-SYNC. These include setting V-Sync to “Force On,” which keeps your frame rate from exceeding your maximum refresh rate; if that were to happen, G-SYNC would disengage and screen tearing could return.

Keeping V-Sync set to Force On means V-Sync enables itself when your frame rate/refresh rate reaches the upper limit. A real life example: on a 144 Hz G-SYNC monitor, these settings keep G-SYNC enabled as long as the refresh rate is between around 30-143 Hz (10-143 FPS), but at 144 FPS/144 Hz V-Sync takes over to effectively limit the frame rate. A frame rate limiter can be used alongside these settings to prevent V-Sync from ever kicking in, e.g. setting the limiter to 140 FPS so that the monitor never goes past 140 Hz, keeping G-SYNC always active.
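For illustration, here is what a very basic frame rate limiter looks like in Python: it paces each frame to a fixed time budget (1/140 s for a 140 FPS cap) by sleeping until the next deadline. This is a hypothetical sketch, and `run_capped` is an illustrative name; limiters built into games, drivers, and third-party tools are more precise than `time.sleep`, but the principle of capping a few FPS below the maximum refresh rate is the same.

```python
import time


def run_capped(render_frame, cap_fps: float, frames: int) -> None:
    """Call render_frame() repeatedly, never faster than cap_fps on average."""
    frame_budget_s = 1.0 / cap_fps
    next_deadline = time.perf_counter()
    for _ in range(frames):
        render_frame()
        next_deadline += frame_budget_s
        remaining = next_deadline - time.perf_counter()
        if remaining > 0:
            time.sleep(remaining)  # finished early: wait out the rest of the budget
        else:
            # Running behind the cap: resync rather than rushing to catch up.
            next_deadline = time.perf_counter()


if __name__ == "__main__":
    start = time.perf_counter()
    run_capped(lambda: None, cap_fps=140, frames=140)
    print(f"140 frames took {time.perf_counter() - start:.2f} s")
```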

AMD FreeSync – Royalty-free implementation of variable refresh rate technology, stemming directly from VESA AdaptiveSync. It does the same thing as G-SYNC, but because of the different implementation (it does not require one specific proprietary scaler; the scaler is still involved in the process, just not to the extent it is with G-SYNC), there are some differences between G-SYNC and FreeSync monitors that use the same panel.

Basic FreeSync has very few standards to meet, so early FreeSync monitors often had terrible implementations, including non-functional Low Framerate Compensation (LFC). G-SYNC, on the other hand, drives up monitor cost by $150-200. FreeSync is supported by AMD, Intel, and now even NVIDIA graphics processors.

FreeSync’s refresh rate range is more limited than G-SYNC’s, but LFC can make that negligible… if it works. However, at lower frame rates (toward the bottom of the FreeSync range), FreeSync may add more lag than G-SYNC and give a less smooth experience, because frames are queued on the G-SYNC module, which has its own RAM, so with G-SYNC the GPU never has to repeat frames.

It is, however, possible to have resolution scaling on the monitor side with FreeSync running over DisplayPort. It all depends on the scaler.

AMD FreeSync Premium Pro – While basic FreeSync has very few standards in place and FreeSync Premium only requires LFC, 1080p or above, and 120 Hz or above, FreeSync Premium Pro (formerly FreeSync 2) has tight standards. The variable refresh rate range doesn’t have to be as enormous as G-SYNC’s, but it is still more tightly regulated, and most of all LFC (Low Framerate Compensation) is again mandatory, so the effective frame rate range goes all the way down to values as low as 10-15 FPS. It also mandates HDR support, intelligent dynamic HDR on/off switching, and more. FreeSync Premium Pro is thus better than G-SYNC, and equivalent to G-SYNC Ultimate.

Advantages of Variable Refresh Rate

- Removes all screen tearing

- Reduces microstuttering

- No chance of introducing stutter like V-Sync does when failing to hold the target frame rate with V-Sync

- Minimal impact on input lag unlike V-Sync

Disadvantages of Variable Refresh Rate

- G-SYNC significantly adds to the cost of the monitor, as does FreeSync Premium Pro (FreeSync and G-SYNC Compatible do not)

- Generally does not work with blur reduction; ASUS does have a functional implementation of both (ELMB-Sync), but it is flawed

- Doesn’t work with NVIDIA DLSS 3’s frame generation component. This is a flaw of DLSS 3 frame generation rather than of VRR. DLSS 3 frame generation doesn’t even work with V-Sync, and it’s currently unknown whether it works with Fast Sync. It doesn’t work with VR either; the tech is currently in its first iteration, and it shows.

Fast Sync

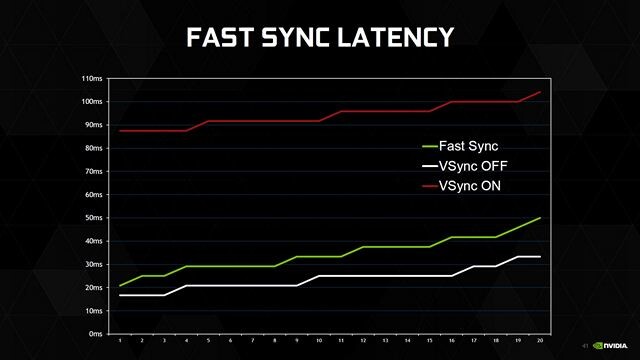

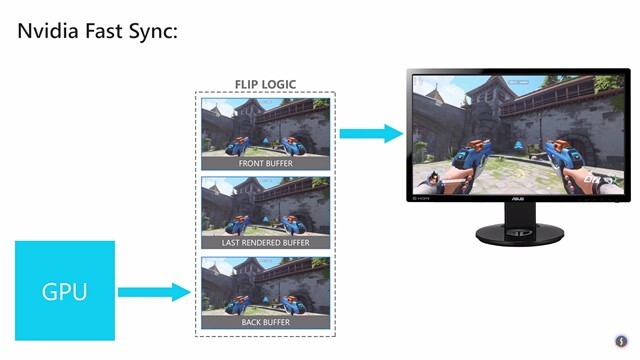

NVIDIA Fast Sync – NVIDIA actually didn’t invent this technology, but they resurrected it. It has nothing to do with variable refresh rate. Here is an in-depth description of Fast Sync:

NVIDIA manages to make this possible by introducing a new additional “Last Rendered Buffer” that sits right between the Front and Back buffer. Initially, the GPU renders a frame into the Back buffer, and then the frame in the Back buffer is immediately moved to the Last Rendered Buffer. After this, the GPU renders the next frame into the back buffer and while this happens, the frame in the last rendered buffer is moved to the front buffer. Now the Last Rendered buffer waits for the next frame, from the back buffer. In the meantime, the Front buffer undergoes the scanning process, and the image is then sent to the monitor. Now, the Last Rendered Buffer sends the frame to the front buffer for scanning and displaying it on the monitor. As a result of this, the game engine is not slowed down as the Back buffer is always available for the GPU to render to, and you don’t experience screen tearing as there’s always a frame stored in the front buffer for the scan, thanks to the inclusion of the Last Rendered Buffer.

In short, Fast Sync is meant to eliminate tearing and introduce negligible amounts of input lag. And it does just that.

However, Fast Sync should only be used when frame rate is always above twice your refresh rate. So if you have a 144 Hz monitor, only use Fast Sync in a game that never drops below 288 FPS. Otherwise, Fast Sync will stutter. But if you can maintain the frame rate target, then the results are superb, especially when combined with blur reduction.
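A simplified Python model shows why Fast Sync wants so much frame rate headroom. It assumes perfectly constant render times and that, at every refresh, the display shows the newest fully rendered frame (the role of the “Last Rendered Buffer” described above); every other frame is discarded. The function names are illustrative.

```python
def fast_sync_shown(render_fps: int, refresh_hz: int, refreshes: int) -> list:
    """Index of the newest completed frame at each refresh (frame i completes at i / render_fps)."""
    return [(k * render_fps) // refresh_hz for k in range(refreshes)]


def frame_steps(shown: list) -> list:
    """How many rendered frames the game advanced between consecutive refreshes."""
    return [later - earlier for earlier, later in zip(shown, shown[1:])]


for fps in (100, 300):
    shown = fast_sync_shown(fps, 60, 7)
    print(f"{fps} FPS on 60 Hz: shown {shown}, steps {frame_steps(shown)}")
```

At 100 FPS on a 60 Hz screen, the game advances one frame on some refreshes and two on others, so motion looks uneven; at 300 FPS it advances an even five frames per refresh. Real frame times fluctuate, so the steps are never perfectly even, but the more frames rendered per refresh, the smaller each uneven step is relative to the motion.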

Blur Reduction (e.g. NVIDIA ULMB, LightBoost, ASUS ELMB, etc)

I briefly touched on this before. Pretty much every blur reduction technology works essentially the same way: NVIDIA LightBoost, which popularized it, as well as NVIDIA ULMB (Ultra Low Motion Blur), BenQ Blur Reduction, Eizo Turbo240, ASUS ELMB, and others.

First, you need to understand that LCD displays are backlit: the screen is illuminated by lights (LEDs in this day and age) installed either along the edges of the panel (99.9% of computer monitors) or behind it (the best TVs; this is the better of the two methods).

These blur reduction technologies remove essentially all perceivable motion blur by strobing the backlight: turning it off while pixel transitions are in progress and flashing it only on fully refreshed frames. This replaces the sample-and-hold behavior that non-strobing monitors use.

No more displaying, or holding, a frame while waiting for the next one, which is what causes most motion blur. Instead, the backlight shuts off in between frames. As you may have guessed, this can cause visible flicker, like PWM dimming, which can cause headaches or worse, but a high enough refresh rate makes the flicker unnoticeable.

The image used above when demonstrating high refresh rates on the ASUS ROG Swift PG248Q is the best example, so we’ll just repeat it.

That 180 Hz photo is the cleanest non-strobing motion image I’ve ever seen. And even ULMB @ 85 Hz beats it. ULMB @ 100 Hz and 120 Hz destroy it!

Blur reduction typically can’t be used at the same time as variable refresh rate, and it also conflicts with HDR. The exceptions are a hack on at least one LightBoost monitor, as well as ASUS “ELMB-Sync” monitors, which combine blur reduction and variable refresh rate. Why don’t these technologies work together? Because blur reduction affects brightness (greatly reducing it, except on Samsung’s monitor implementation and Eizo Turbo240, which only slightly reduce it), and with blur reduction enabled, brightness varies with the screen refresh rate!

Think about it: the backlight (or pixel light, if we had strobing OLED, which we don’t in TVs/monitors) is strobing, turning on and off rapidly. At 120 Hz, a strobe occurs approximately every 8.33 ms. At 60 Hz, one occurs approximately every 16.67 ms, so the backlight stays off for much longer between flashes, significantly altering brightness.
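Here is an idealized Python model of that effect: average brightness is the backlight’s peak brightness multiplied by its duty cycle, the fraction of each refresh period during which it is lit. It assumes a fixed pulse length and a hypothetical 400 cd/m2 backlight; real implementations may overdrive the LEDs during the pulse (as with Turbo240’s overvolted backlight), which this model would represent as a higher peak.

```python
def strobed_brightness(peak_nits: float, pulse_ms: float, strobe_hz: float) -> float:
    """Average luminance of a strobed backlight: peak x fraction of time lit."""
    period_ms = 1000.0 / strobe_hz
    duty_cycle = min(pulse_ms / period_ms, 1.0)  # cannot be lit more than 100% of the time
    return peak_nits * duty_cycle


PEAK = 400.0  # hypothetical backlight peak, cd/m2
PULSE = 1.5   # hypothetical strobe length in ms (also each frame's persistence)
for hz in (60, 85, 120, 240):
    print(f"{hz:>3} Hz strobing: ~{strobed_brightness(PEAK, PULSE, hz):.0f} cd/m2")
```

With the pulse length (and therefore the motion clarity) held constant, halving the strobe rate halves the brightness, which is why brightness has to be compensated whenever the refresh rate changes under VRR.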

So to properly implement blur reduction and VRR (variable refresh rate) at the same time, brightness compensation needs to happen. This is possible – ELMB-Sync does it, but apparently not perfectly. VR headsets do this.

We also mentioned that blur reduction conflicts with HDR, and this should be obvious now: blur reduction greatly reduces brightness, while HDR is all about short peak bursts of extreme brightness in just the parts of the screen that need it (e.g. a bright sky being far brighter than the rest of the screen, as in real life).

Of course, not all content is HDR, so if you have a display that’s capable of both, you could always use blur reduction for SDR content and turn it off for HDR content. But wait! HDR is mostly useless on LCD displays due to haloing caused by perpetually ineffective local dimming, and OLEDs don’t have blur reduction, so this HDR/blur reduction incompatibility is not yet an issue in the real world. It will only become a conflict if OLED displays gain blur reduction one day.

Advantages of Blur Reduction

- Removes all perceivable motion blur. Creates CRT-like motion clarity at 120 Hz and beyond with V-Sync or Fast Sync enabled.

Disadvantages of Blur Reduction

- Flicker, which is more obvious the lower the refresh rate. I don’t think anyone can SEE the flicker when strobing at 120 Hz, but eye fatigue can still occur after lengthy exposure. This depends on the person. At 100 Hz I can see flicker on the desktop but not in games, but eye fatigue occurs after a few hours usually. Below 100 Hz, forget about it since the flicker is blatant everywhere, and I get massive headaches at 85 Hz.

- Reduced maximum brightness. The slower the strobing (e.g. 60 Hz strobing vs 120 Hz), the dimmer the image. This is why it conflicts with HDR, and it should only be used in dim/dark rooms. Eizo’s Turbo240 and Samsung’s blur reduction are the only ones I know of that can still achieve very high brightness (over 250 cd/m2). BenQ’s blur reduction still allows the monitor to reach around 190 cd/m2, which is bright for SDR (for reference, dark rooms should stick to around 120 cd/m2). 120 Hz ULMB displays can still achieve around 120 cd/m2, but not much higher. LightBoost gets brighter than ULMB. ULMB is so dim because it doesn’t apply extra voltage to the backlight to compensate for the brightness lost to strobing. Also, higher refresh rate = faster strobing = less brightness loss, hence why Eizo Turbo240 gets so bright (240 Hz strobing + overvolted backlight).

- Strobe crosstalk. A type of ghosting that appears with blur reduction. It’s worse at the bottom of the screen on most models. Easily visible if V-Sync is disabled, and more visible the lower the refresh rate (so it’s extremely visible at 85 Hz for example).

- Building on my last point, most people will only find single strobe blur reduction usable at 100 Hz or above with V-Sync enabled. Also, from my experience only Eizo Turbo240 is effective at lower refresh rates/frame rates.

- Reduced contrast and color accuracy. It’s only awful on LightBoost monitors, though. The effect on BenQ, ULMB, ELMB, and Turbo240 displays is minimal, and Turbo240 has even less of an impact than ULMB.

- Can increase input lag slightly. Seems to be negligible for ULMB, ELMB, and BenQ blur reduction.

Below is a listing of common blur reduction technologies. Note that NVIDIA’s only work over DisplayPort.

- NVIDIA LightBoost – Perhaps the first blur reduction implementation on computer monitors? It’s the first one I heard of. LightBoost has since been replaced by NVIDIA’s own ULMB, since LightBoost reduces maximum brightness far more and degrades contrast and color quality far more. Works at no more than 120 Hz.

- NVIDIA ULMB (Ultra Low Motion Blur) – A fitting name indeed. Like LightBoost, but with less impact on peak luminance (although it still GREATLY limits it) and an insignificant effect on contrast and color quality. Many ULMB monitors have adjustable pulse width, so ULMB is generally customizable, unlike other blur reduction modes! Lower pulse width = shorter strobe length = less blur but significantly lower brightness, and vice versa. ULMB functions at 85 Hz, 100 Hz, and 120 Hz, but sadly not at 144 Hz or above.

- NVIDIA ULMB 2 – Much brighter, with significantly reduced crosstalk and better motion clarity; the best LCD/LED strobing solution. It includes a special overdrive mode that tries to eliminate crosstalk. To drive home the point about higher brightness, ULMB 2 requires a sustained 250 cd/m2. See Blur Busters’ writeup on it.

- Eizo Turbo240 – Seen on the legendary Eizo Foris FG2421 monitor, the first gaming oriented VA monitor ever. Turbo240 uses 240 Hz interpolation (internally converting the refresh rate to 240 Hz) and then strobes the backlight at 240 Hz, so it has the least obvious flicker (the faster the strobing, the less visible the flicker) and the most brightness (the FG2421 can still output over 250 cd/m2 with Turbo240 enabled). It functions as low as 60 Hz, but no matter the setting it interpolates to 240 Hz. I thought the interpolation would be a bad thing, but it doesn’t seem to be. I don’t feel the extra lag, although some superhuman Counter-Strike players and the like would. Motion clarity is superior to ULMB on the Acer Predator XB270HU, with less ghosting. Even Turbo240 at 60 Hz looks surprisingly good, and better than not using it.

- BenQ Blur Reduction – Perhaps the best in the mid-2010s. Achieves much higher max brightness than LightBoost and ULMB, but much lower than Turbo240. Works at 144 Hz and even down to 60 Hz, though I wouldn’t expect that to look good. Minimal impact on contrast and colors. At 144 Hz and 120 Hz the backlight strobes 1:1 with the refresh rate.

- BenQ DyAc/DyAc+ – Successor to BenQ Blur Reduction.

- Samsung Blur Reduction – This refers to what Samsung uses on its gaming monitors. Instead of blinking the entire backlight at once, it scans through four separate areas, thus removing strobe crosstalk! However, brightness is locked for some reason (and it’s extremely bright at 250+ cd/m2). Single strobes at 100 Hz, 120 Hz, and 144 Hz; double strobes below 100 Hz.

- ASUS ELMB – A pretty basic implementation from ASUS without pulse width adjustment.

- ASUS ELMB-Sync – At the time of writing, the only implementation that combines blur reduction with variable refresh rate. I’ve never used it, but I’ve read complaints about motion artifacts caused by this mode.
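The pulse width trade-off described above can be put into rough numbers. This is a minimal sketch, assuming Blur Busters’ rule of thumb that 1 ms of persistence produces about 1 pixel of motion blur per 1000 pixels/second of eye-tracked motion, and assuming the backlight’s peak output stays fixed so average brightness scales with duty cycle (real strobed backlights often boost peak output to compensate). All function names are illustrative, and `strobes_per_frame` is a hypothetical helper that simply mirrors the Samsung single/double strobe behaviour listed above.

```python
# Back-of-the-envelope model of strobed backlights (simplified, see assumptions).


def motion_blur_px(speed_px_per_s: float, persistence_ms: float) -> float:
    """Blur trail width: how far the eye tracks while one frame stays lit."""
    return speed_px_per_s * persistence_ms / 1000.0


def duty_cycle(pulse_width_ms: float, strobe_hz: float) -> float:
    """Fraction of time the backlight is on. Average brightness scales with this
    only if peak backlight output is held constant (an assumption)."""
    return min(1.0, pulse_width_ms * strobe_hz / 1000.0)


def strobes_per_frame(refresh_hz: float) -> int:
    """Hypothetical rule mirroring the Samsung mode described above:
    one strobe per frame at 100 Hz and up, two strobes per frame below."""
    return 1 if refresh_hz >= 100 else 2


def duplicate_image_gap_px(speed_px_per_s: float, refresh_hz: float) -> float:
    """With more than one strobe per frame, the same frame is flashed again while
    the eye keeps moving, so copies appear spaced by speed / strobe rate."""
    n = strobes_per_frame(refresh_hz)
    if n == 1:
        return 0.0
    return speed_px_per_s / (refresh_hz * n)


if __name__ == "__main__":
    speed = 1000.0  # eye-tracked motion speed in px/s
    hold_ms = 1000.0 / 120  # sample-and-hold at 120 Hz: each frame lit for the whole refresh
    print(f"120 Hz sample-and-hold blur: {motion_blur_px(speed, hold_ms):.2f} px")
    print(f"120 Hz strobed, 1 ms pulse:  {motion_blur_px(speed, 1.0):.2f} px")
    print(f"Duty cycle at 1 ms / 120 Hz: {duty_cycle(1.0, 120):.0%}")
    print(f"Double-image gap at 85 Hz:   {duplicate_image_gap_px(speed, 85):.2f} px")
```

Shortening the pulse shrinks the blur trail and the duty cycle by the same factor, which is exactly the blur versus brightness trade-off ULMB’s pulse width setting exposes. Double strobing avoids very visible low-frequency flicker, but because each frame is flashed twice, moving objects can show a faint duplicate image.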

HDR

People always confuse this with software HDR rendering, and I can’t blame them. Display HDR is a modern content format. There are many HDR standards: Dolby Vision, HDR10, HDR10+, VESA DisplayHDR standards, etc. What’s common among them are the following:

- Content is mastered for wider color gamuts, from DCI-P3 to Rec.2020, various ACES gamuts, scRGB, and more

- 10-bit or 12-bit color depth

- Improved dynamic contrast, which is where the HDR name comes from, better simulating the high dynamic range of real-world lighting
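To make the bit depth and dynamic range points concrete: HDR10 and Dolby Vision encode brightness with the SMPTE ST 2084 “PQ” curve, which maps the signal to absolute luminance up to 10,000 cd/m2 and spends its code values roughly where the eye is most sensitive. The sketch below implements that curve and its inverse using the published ST 2084 constants. The 10-bit conversion assumes full-range code values (0 to 1023), which is a simplification, since HDR10 video usually uses limited range; the function names are illustrative.

```python
# Sketch of the SMPTE ST 2084 "PQ" transfer function used by HDR10 / Dolby Vision.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PEAK_NITS = 10000.0  # PQ is defined up to 10,000 cd/m2


def pq_to_nits(signal: float) -> float:
    """EOTF: normalized signal in [0, 1] -> absolute luminance in cd/m2."""
    e = max(0.0, min(1.0, signal)) ** (1 / M2)
    return PEAK_NITS * (max(e - C1, 0.0) / (C2 - C3 * e)) ** (1 / M1)


def nits_to_pq(nits: float) -> float:
    """Inverse EOTF: luminance in cd/m2 -> normalized signal in [0, 1]."""
    y = max(0.0, min(nits, PEAK_NITS)) / PEAK_NITS
    ym = y ** M1
    return ((C1 + C2 * ym) / (1 + C3 * ym)) ** M2


def code_value_10bit(nits: float) -> int:
    """Full-range 10-bit code value (an assumption; HDR10 usually uses limited range)."""
    return round(nits_to_pq(nits) * 1023)


if __name__ == "__main__":
    for nits in (0.1, 100, 1000, 10000):
        print(nits, round(nits_to_pq(nits), 4), code_value_10bit(nits))
```

Running it shows roughly half of the signal range is spent below 100 cd/m2, which is why 10-bit or 12-bit depth matters: with only 8 bits, steps between adjacent code values would be large enough to show banding.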

The dynamic contrast, i.e. the actual HDR effect, is only useful on OLED displays, however. See the video below for why.

The issue with LCD/LED screens displaying HDR content is that they do not have nearly enough dimming zones. The zones next to bright objects are usually kept from turning completely off, which reduces blooming/haloing, but not nearly enough. It’s still extremely obvious in practice, making HDR content look very distracting and unnatural, with light from bright objects illuminating darker sections of the screen.
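Why a handful of zones causes blooming can be shown with a toy one-dimensional model. This is a minimal sketch, not how any real TV works: it assumes naive max-based dimming (each zone’s backlight is set for its brightest pixel), ignores light spreading optically into neighbouring zones, and uses a hypothetical 3000:1 native LCD contrast. Setting `zone_size=1` approximates per-pixel light control like OLED.

```python
def simulate_local_dimming(target_nits, zone_size, native_contrast):
    """Toy 1D model of LCD local dimming.

    target_nits: desired luminance of each pixel in one row, in cd/m2.
    zone_size: pixels per dimming zone (1 = per-pixel control, like OLED).
    native_contrast: contrast ratio of the LCD layer itself, e.g. 3000 for 3000:1.
    """
    displayed = []
    for start in range(0, len(target_nits), zone_size):
        zone = target_nits[start:start + zone_size]
        # Naive max-based dimming: the zone's backlight is set for its brightest pixel.
        backlight = max(zone)
        # The liquid crystals can only block so much light, so nothing in this
        # zone can get darker than backlight / native_contrast.
        floor = backlight / native_contrast
        displayed.extend(max(t, floor) for t in zone)
    return displayed


row = [0.0] * 8 + [1000.0] + [0.0] * 7  # one 1000 cd/m2 highlight on a black background
few_zones = simulate_local_dimming(row, zone_size=8, native_contrast=3000)
per_pixel = simulate_local_dimming(row, zone_size=1, native_contrast=3000)
print([round(v, 3) for v in few_zones])
print(per_pixel == row)
```

In the 8-pixel-zone case, every black pixel sharing a zone with the highlight is lifted to about 0.33 cd/m2 while the other zone stays truly black; that lifted patch is the halo. With per-pixel control the black pixels stay at zero.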

EDIT: As of 2021, someone has finally done full array local dimming (FALD) properly, and it’s Samsung: first with its flagship Q90T, and then with its flagship 4K (not 8K) “QLED” TVs into 2021. See below.

So what does this mean? Well, if you look at the full review, with FALD turned on the Q90T reliably does 10,000:1 dynamic contrast, and more in HDR content. The QN90A (successor to the Q90T) does over 25,000:1 in the same test. That makes it the new best-performing LED TV in the world, but ultimately it still isn’t enough to dethrone OLED, which is why Samsung has moved on to OLED.
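To put those contrast ratios in perspective, the arithmetic below converts them into a black level and into photographic stops (each stop doubles the light). The 100 cd/m2 white level is a hypothetical value chosen for illustration, not a figure from the review; only the 10,000:1 and 25,000:1 ratios come from the text above.

```python
import math


def black_level_nits(white_nits: float, contrast_ratio: float) -> float:
    """Darkest level the screen reaches while showing white_nits elsewhere."""
    return white_nits / contrast_ratio


def dynamic_range_stops(contrast_ratio: float) -> float:
    """Contrast expressed in photographic stops (each stop doubles the light)."""
    return math.log2(contrast_ratio)


WHITE = 100.0  # hypothetical white level in cd/m2
for name, ratio in [("Q90T", 10_000), ("QN90A", 25_000)]:
    print(f"{name}: {ratio:,}:1 -> black {black_level_nits(WHITE, ratio):.4f} cd/m2 "
          f"at {WHITE:.0f} cd/m2 white, {dynamic_range_stops(ratio):.1f} stops")
```

An OLED pixel that is switched off emits no light at all, so its black level is zero and the ratio is effectively unbounded, which is the gap FALD LCDs still cannot close.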

Note that only the Q90T and QN90A have usable FALD, and thus usable HDR (unless you are okay with just a color gamut increase, which most HDR content doesn’t even bring). Even the next best Samsung TV, and all of Samsung’s 8K TVs from 2021, have useless FALD.

So what about the HDR content that’s out there? Most mainstream movies today get Ultra HD Blu-ray releases with HDR, typically with very good HDR implementations, and many classics have been remastered with surprisingly good HDR. So HDR is big in the movie industry.

As for gaming? HDR has been standard in console games since the tail end of the PlayStation 4 and Xbox One era. PC versions, however, often get screwed and ship without it, seemingly because developers know that the vast majority of PC gamers use 1080p 60 Hz LCD displays without HDR, although that’s no good reason to leave HDR out of PC versions.

HDR can be forced into games with varying degrees of success. Windows 11 can do this automatically with Auto HDR. A tool called Special K can do this with lots of manual control but it is limited to DX12, DX11 and OpenGL games.

Can a cheap plasma TV be a good idea for an improvised, low-budget, low-input-lag gaming monitor? Is power consumption much higher? For the record, energy costs here are quite extreme. The same goes for monitor costs, especially from now on due to inflation and shipping supply issues.

Do you think plasma TVs have any particular durability issues? Are regular technicians in 2020 A.D. capable of handling these things?

Great articles here by the way, not just the technical ones. You guys deserve way more viewing than currently.

Thanks for the kind words. I don’t think you’re going to find any low input lag plasma TVs, unfortunately. Low lag TVs seem exclusive to recent, especially higher end, designs, going by reviews from places like RTINGS. Modern LED-backlit LCDs should have much lower power consumption than plasma.

Burn-in is also a concern for plasma. Supposedly this was no longer an issue with some of the last produced plasmas from Panasonic and Samsung but I can’t be sure.

Very interesting. I would like to read your opinion on curved vs. flat monitors, and on panel technologies now (IPS vs TN vs VA).

Also do you have a list of the best gaming monitors?

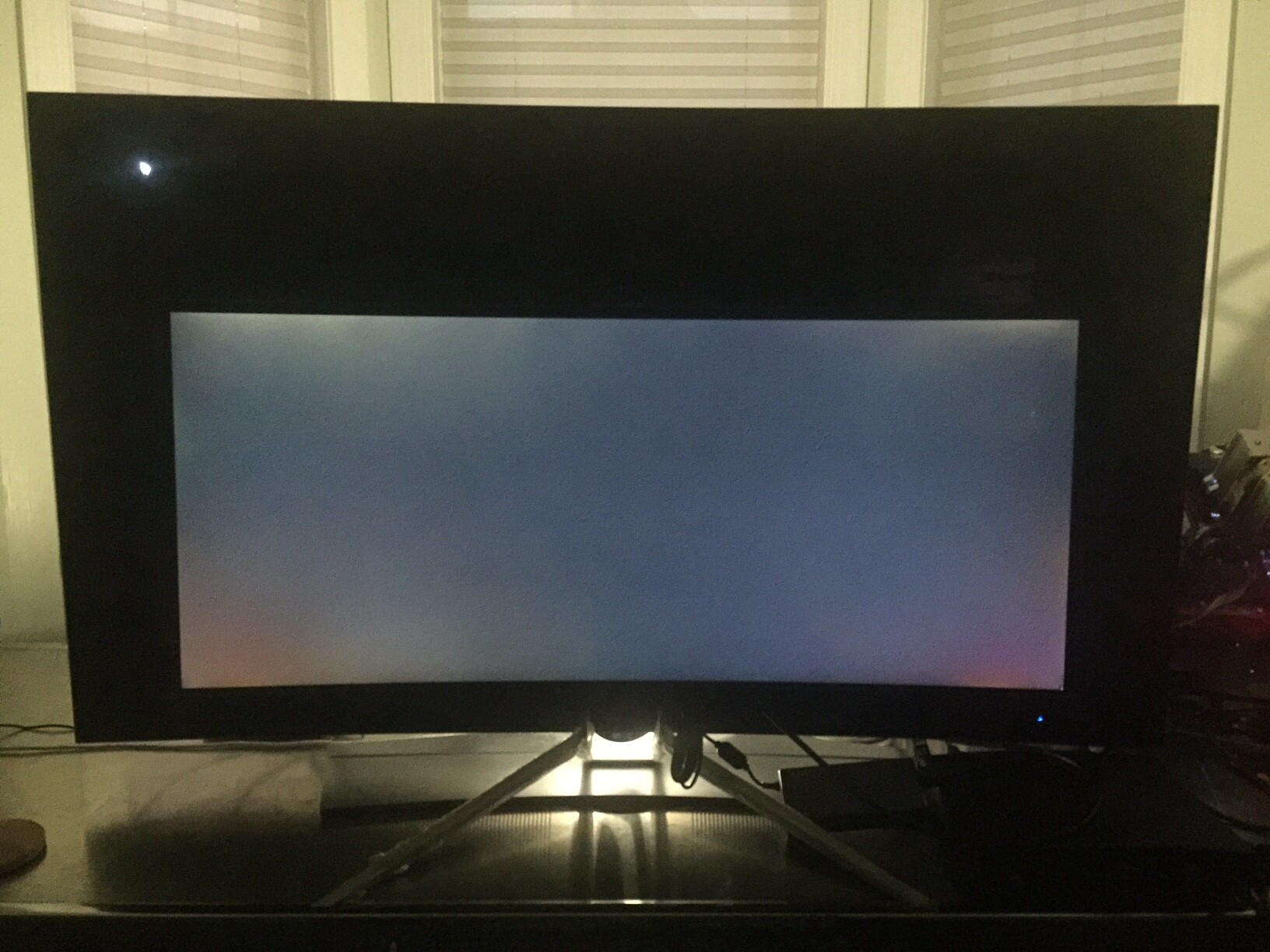

The main reason for curved is to try and preserve viewing angles on wide LCD screens, since all types (TN/IPS/VA) can show some shifting (different kinds depending on the panel type) towards the edges, especially when talking about 35″. Ultimately I never found it made too big of a difference. I will also warn that ultrawide monitors = much higher chance of backlight bleed. VA, even in computer monitors, still handily beats IPS and of course TN when it comes to static image quality; static contrast that’s usually 3x higher in the monitor world (5-7x higher in the TV…

Thanks for the advice. Personally, I tend to favour curved monitors since they feel a lot more immersive, plus they are much more comfortable to view than flat ones. But that’s just how I see it. I also tend to like VA monitors. The contrast really makes a difference in picture quality. And the reason I favour gaming monitors over TVs is price, as gaming monitors tend to be a lot more affordable. I hope the gaming monitor market will improve with new technology such as Micro LED,…