Complacency caused by a lack of competition, corporate greed, poor decision making, and low market demand due to ignorance are just a few reasons why certain computer hardware industries are so far behind the times. In this article we will explore which computer hardware industries are lagging so badly, and discuss where they could and should be by now. We will be looking at industries relevant to PC gaming, so this is an article for gamers.

Computer Monitors

We talk a lot about monitors and display technology in general here at GND-Tech. Display technology in general is not where it should be, but computer monitors have it the worst. To those without much knowledge in this area, it would be beneficial to first read this article.

Brief history lesson: LCD technology is what totally replaced CRT. This brings up the first issue: this shouldn’t have happened. LCD taking over the lower end markets is fine, but it’s not really suitable for enthusiast gaming or movie/TV watching. A much better technology was proposed to succeed CRT, and that was FED, or Field Emission Display. SED, or Surface-Conduction Electron-Emitter Display, was born of this. Both technologies are far superior to LCD in virtually every way, and quite close to OLED, with some benefits over it. They are like CRT taken to another level: rather than relying on a single cathode ray tube (CRT), both FED and SED use a matrix of tiny electron emitters, in effect a tiny CRT for each individual pixel.

Self-illuminating pixels, which SED/FED and OLED offer, make for the best type of display. They give you true blacks, since the pixels emit no light in black scenes, and with that come much higher (virtually infinite) contrast ratios. LCD, by contrast, uses a static edge-mounted or back-mounted light to illuminate the display, making it impossible to properly display blacks and greatly limiting contrast. SED/FED would have been a much more real looking display, more like a window than a screen, and the natural evolution of CRT.

Thus, the benefits over LCD are superior contrast and blacks, perfect luminance uniformity, superior color uniformity, superior color accuracy (better than OLED in this regard as well), faster response times (1 ms with no overdrive artifacts), and apparently greater power efficiency as well. Downsides may have been lower potential peak brightness and temporary image retention on static images, which requires countermeasures to avoid.

A prototype Canon SED TV in 2006.

Many enthusiasts are strong advocates for OLED, but had FED and/or SED taken over instead of LCD, even if not completely (starting from the high end market and eventually working their way down, as OLED is doing), we wouldn’t be nearly as excited for OLED.

So why didn’t FED or SED take over? The main reason is cost. They were, and would have remained, more expensive to produce than LCD. OLED has a chance of eventually succeeding LCD not because it’s significantly better in essentially every way, but because it may eventually be cheaper and simpler to produce.

But FED and SED weren’t the only panel technologies superior to LCD. Remember Plasma? It was not suitable for monitors, as the panels had to be large, but it was much better than LCD for TVs. There were of course misconceptions about Plasma, mainly regarding “burn-in”, but by the end of its lifespan only temporary image retention from static images was present, and the TVs had features to clear it. The benefits of Plasma were CRT-like motion clarity and response times, greatly superior to LCD (some models refreshed their phosphors at 600 Hz, which didn’t create input lag the way modern motion interpolation does), and much better image quality, with deeper blacks and contrast ratios potentially exceeding 20,000:1.

But again, LCD was cheaper to make and more economical/efficient than Plasma, so Plasma bit the dust. This is irrelevant for computer monitors, but it kept the TV industry lagging behind where it should be.

OLED isn’t a very new technology, yet it’s taking way too long to reach the computer monitor industry. LG has an OLED TV lineup and Panasonic released one OLED TV using an LG panel, and that’s it. The industry should have pushed harder for OLED, not dragged out its release.

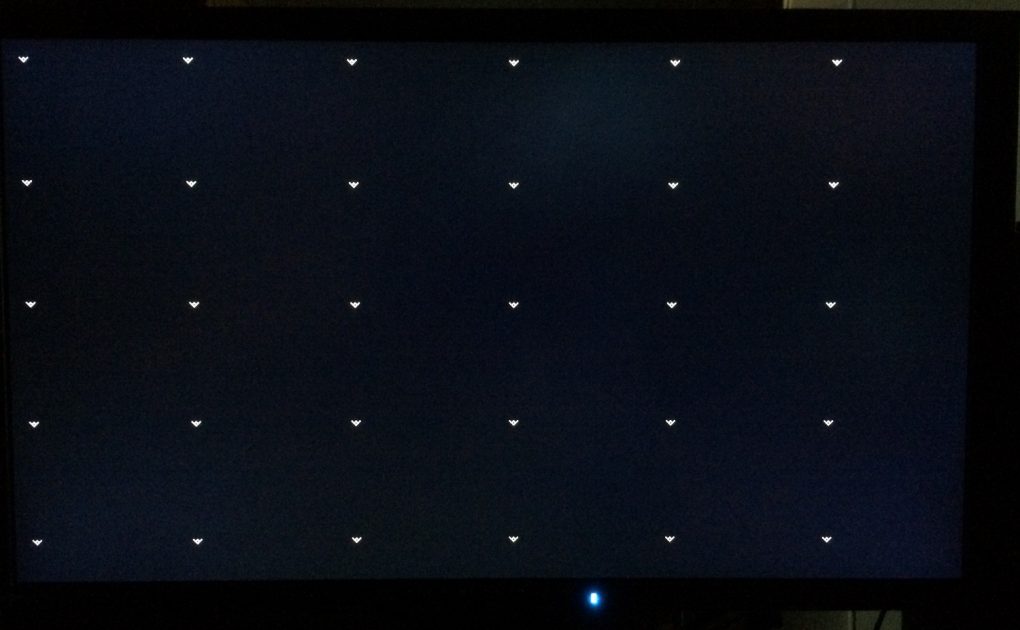

But even LCD technology in computer monitors is, for the most part, years behind that of the high end TV industry. The “best gaming monitors” of today, including all high refresh rate models, use edge-lit backlighting, while very high end TVs use full array backlighting (the LEDs are mounted behind the panel rather than on the edges). Edge-mounted lighting is greatly inferior, as it produces heavily flawed and inconsistent brightness uniformity across the screen and has backlight bleeding issues (light “spilling” onto the screen). But even edge-lit TVs have far fewer bleeding and uniformity issues because they have superior QC.

A defective Eizo Foris FG2421 and the horrifying backlight bleed it had.

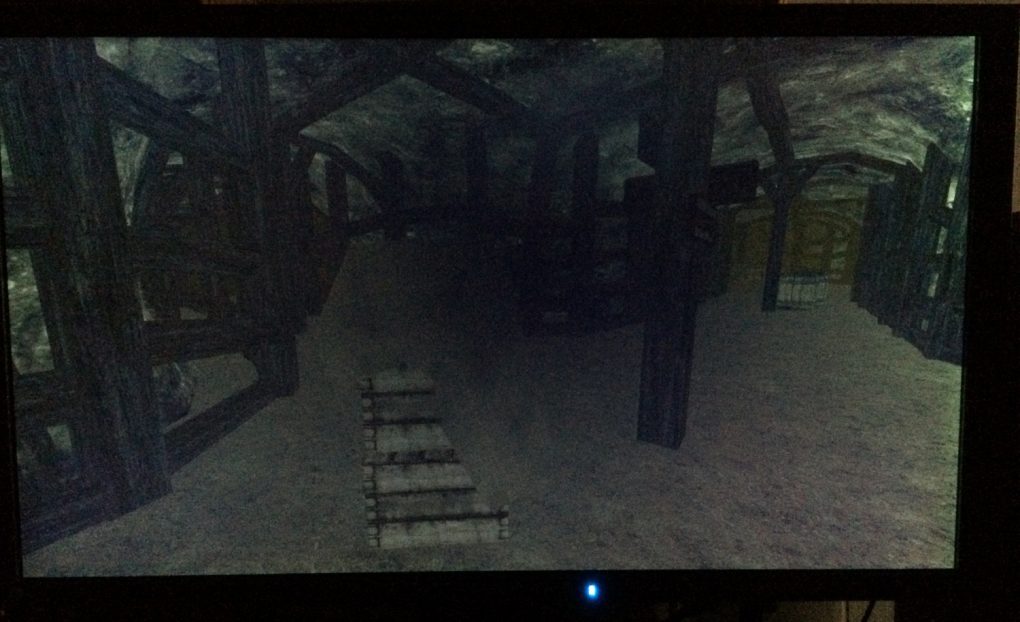

The same FG2421, showing the effects in game.

Where are the full array gaming monitors? But that’s not the only deficiency in gaming monitors, and not even the biggest one. The biggest problem with LCD computer monitors, aside from QC, is that high end ones only use TN or IPS panels. TN is the ideal LCD type for super high refresh rate competitive gaming monitors, like the ASUS 180 Hz and 240 Hz models, since image quality is almost irrelevant there. But IPS is a waste of time, at least without full array local dimming with hundreds of dimming zones, and especially given the rather poor IPS panels used in IPS gaming monitors (144 Hz models in particular). These monitors set new records for the amount of IPS glow, which ruins image quality rather than enhancing it compared to TN.

So IPS without extreme local dimming has no real use in gaming monitors. It’s meant to look better than TN, but it looks worse in dark scenes, and elsewhere the difference over the 1440p 144 Hz TN monitors is negligible. Both have only around 1000:1 static contrast, viewing angles don’t matter that much (as long as they’re good enough for head-on viewing), and color accuracy is very similar, at least after calibration.

For immersive gaming, VA is the temporary solution until OLED finally makes an appearance, unless the upcoming 4k 144 Hz IPS monitors with 384 dimming zones prove better. But VA monitors have been problematic, often showing response time peaks of 40 ms or more, which leads to nasty trailing/streaking behind moving objects in certain color transitions. If not this, then they often have excessive overdrive, leading to inverse ghosting. They tend to have only 2500:1 to 3000:1 static contrast, a big improvement over the 1000:1 of IPS and TN, but when the average $700+ TV has 5000:1 contrast today, and higher end ones might have 7000:1, it is upsetting.
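To put those contrast figures in perspective, here is a rough sketch of the black level each one implies. The 120 nit white level is our own assumption for illustration (a typical calibrated brightness), not a measurement of any particular display.

```python
def black_level_nits(white_nits: float, contrast_ratio: float) -> float:
    """Black luminance implied by a static contrast ratio at a given white level."""
    return white_nits / contrast_ratio


WHITE_NITS = 120.0  # assumed calibrated white level, not a figure from this article

# Static contrast ratios quoted in the text above.
panels = {
    "IPS/TN monitor": 1000,
    "VA monitor (low end of range)": 2500,
    "VA monitor (high end of range)": 3000,
    "average $700+ VA TV": 5000,
    "higher end VA TV": 7000,
}

for name, ratio in panels.items():
    black = black_level_nits(WHITE_NITS, ratio)
    print(f"{name}: {ratio}:1 -> {black:.3f} nits black")
```

The lower the number, the closer "black" is to actually being black. At the same white level, a 5000:1 panel shows blacks five times darker than a 1000:1 panel.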

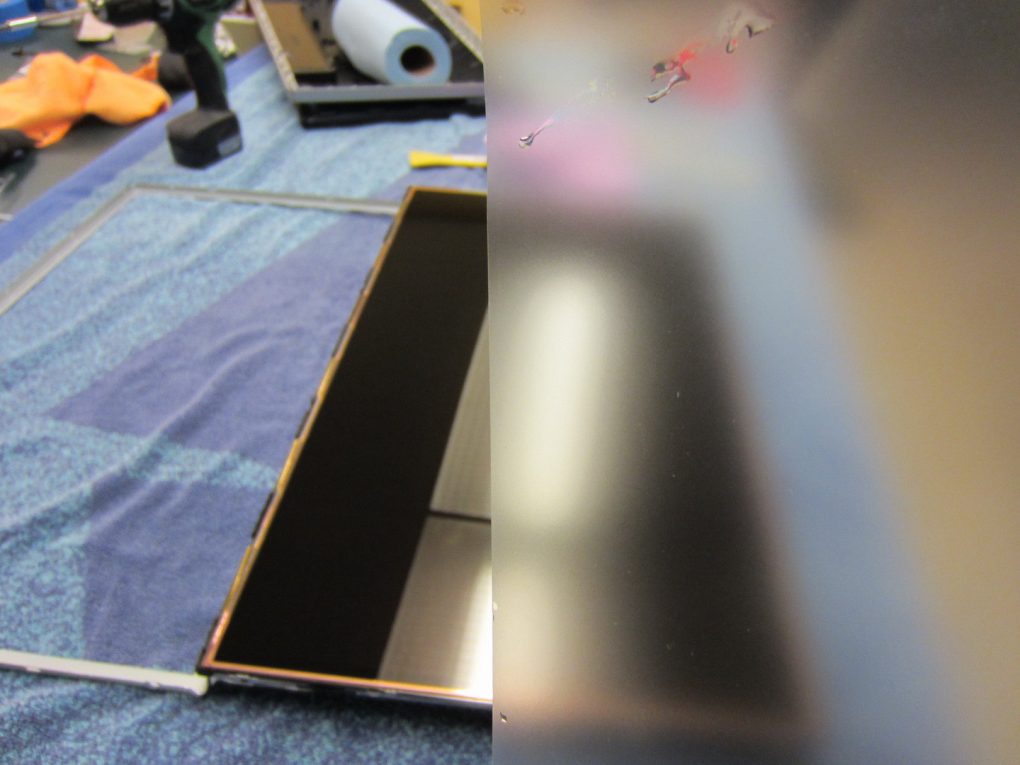

Another issue: why do TVs get less intrusive glossy coatings or better, while monitors always get blurry, image-damaging AG (anti-glare) coatings? See this picture below for reference.

Before you mention glare and reflection issues, higher end TVs (in the $700 and above price range) have the best of both worlds with their anti-glare glossy coatings. AR (anti-reflective) treated glass is used on some very high end models, like the LG E6 and upcoming E7.

To illustrate the differences between the best gaming monitors, the aforementioned Samsung high refresh rate VA, and very high end VA TVs, see the table below. OLED isn’t even included since that’s unfair for LCD.

There is a consistent pattern here. The TVs are designed around picture quality, where they are light-years ahead of any monitor, while the monitors are designed more around high refresh rate fluidity. The fact that the TVs for some reason still use PWM backlighting highlights this the most, since the TVs actually have good response times (as do the monitors). Note that the backlight isn’t visibly flickery on the TVs, but it harms motion clarity somewhat.

But as of 2017, there is some hope. The ASUS ROG Swift PG27UQ, Acer Predator XB272-HDR, and an AOC 32″ equivalent have been announced. These are 27″ (32″ for the AOC) 3840 x 2160 144 Hz monitors driven over DisplayPort 1.4 (so DSC “lossless compression” is used), combining an AU Optronics AHVA (IPS) panel with full array local dimming and 384 dimming zones. Furthermore, they are designed around the DCI-P3 color space, whose gamut is roughly 25% larger than sRGB’s. While this will only oversaturate sRGB content like video games, the oversaturation is not grotesque, and for all we know these monitors will have a good sRGB emulation mode. These monitors are also equipped with quantum dot technology, 1000 nits peak brightness (800 nits for the AOC), HDR support, and G-SYNC. This may surpass VA, although the lingering issue is the haloing or blooming effect caused by 384 dimming zones being insufficient (per-pixel dimming is ideal, which equates to 8,294,400 ‘dimming zones’, as on a 4k OLED screen). The halo/bloom effect will be worse (stronger) on these IPS monitors than on a VA screen with the same number of dimming zones, because IPS subpixels are worse than VA at blocking light (which is why VA static contrast is anywhere between 2.5x and 7x as good as IPS, depending on the exact panel).
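The arithmetic behind the dimming zone complaint is simple enough to sketch. The helper names below are our own, and the code only assumes that zones are spread evenly across the panel.

```python
def total_pixels(width: int, height: int) -> int:
    """Number of pixels on the panel, i.e. the zone count of per-pixel dimming."""
    return width * height


def pixels_per_zone(width: int, height: int, zones: int) -> float:
    """Average number of pixels sharing one backlight zone (assumes evenly sized zones)."""
    return total_pixels(width, height) / zones


WIDTH, HEIGHT = 3840, 2160  # resolution of the announced monitors
ZONES = 384                 # full array local dimming zone count

print(total_pixels(WIDTH, HEIGHT))            # per-pixel "zones" of a 4k self-emissive panel
print(pixels_per_zone(WIDTH, HEIGHT, ZONES))  # pixels lit by each LCD backlight zone
```

With 384 zones, every zone lights tens of thousands of pixels at once, so a single bright object on a dark background raises the backlight behind all of its neighbors. That is where the halo comes from.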

To summarize all of this, computer monitors are very far behind TVs, and TVs are behind too. LCD should ideally be a dead technology by now. The VA LCD panels used by TVs are overall far nicer than what gaming monitors use, although gaming monitors do have as good motion clarity as one can expect from the fundamentally flawed LCD technology (there is still lots of room for improvement when looking past LCD). Our only hope is an OLED takeover.

Sound Cards

On the last page we discussed the display industry. Now we will discuss computer audio, namely sound cards. As of 2017 and for the last… many years, there are only two options when it comes to sound cards: Creative and ASUS. But first, another brief history lesson.

The late 1990s to mid 2000s was the era where sound cards really mattered. Those were the days of hardware accelerated sound, when PC games actually used the sound card’s processor and delivered superior effects that we no longer have today. Only Creative’s X-Fi processor provided this, so ASUS sound cards were quite useless beyond the general sound quality improvements over onboard audio, which Creative’s cards also bring. Furthermore, Creative did license their X-Fi processors and let other companies, such as Auzentech, use them.

Left: Creative Sound Blaster X-Fi Titanium HD, the highest quality X-Fi sound card ever made and easily on par with today’s flagships. Right: Creative Sound Blaster X-Fi Titanium Fatal1ty Pro, the highest quality 7.1 capable X-Fi sound card ever made.

The days of glorious, superior hardware accelerated video game sound effects were a three part effort, requiring input from the game developers (to make their games use it), Microsoft’s DirectSound3D audio API (standard issue back then, just as the DirectX graphics API is and was), and Creative’s X-Fi processor. Nowadays, both DirectSound3D and X-Fi are dead. DirectSound3D had an open source successor called OpenAL, which is no longer entirely open source, although OpenAL Soft, an open source software-only implementation, remains.

But X-Fi processors are dead, and modern sound card processors, including Creative’s latest Sound Core3D (and of course all ASUS solutions), only process OpenAL instructions via software. As for DirectSound3D, since its hardware acceleration path does not exist in Windows Vista and later Windows operating systems, a program must be used to convert DirectSound3D calls to OpenAL so that sound cards can process the game’s DirectSound3D instructions. The only program that does that is Creative ALchemy, which is proprietary and only works with Creative sound cards.

Long story short, for modern games sound cards are unnecessary. Want to improve sound quality in general with your speakers/headphones? Assuming the speakers/headphones aren’t crap (which they probably are), get an external DAC (and amplifier for headphones) which is better.

Today’s sound cards lack hardware accelerated sound support, but so do the games. Sound cards are lacking in very obvious ways too; Creative’s sound cards only support up to 5.1 surround these days, while a few previous ones (like the above pictured X-Fi Titanium Fatal1ty Pro) support up to 7.1. We’re not even speaking strictly of analog connections (hardly anyone uses analog surround with sound cards anyway), but DSP (processor) support. In Windows, with a modern Creative sound card you can’t select more than 5.1.

Then there are the output connections offered by sound cards. No HDMI, for starters. So for those of us using surround systems, one option is a surround system with active speakers connected to the sound card’s analog outputs, using the sound card’s DAC. There are two major problems with this. First, there are no active center channel or multidirectional surround speakers on the market, to my knowledge, so you’d be using bookshelf speakers all around (turn one sideways for the center channel, I guess). Second, and more blatant, only five sound cards have respectable enough DAC quality for this use: ASUS Xonar Essence ST, ASUS Xonar Essence STX, ASUS Xonar Essence STX II, Creative Sound Blaster X-Fi Titanium HD, and Creative Sound Blaster ZxR. They’re expensive and are still much worse than practically any A/V receiver.

Creative Sound Blaster ZxR, Creative’s latest flagship sound card. Essentially the same as the Titanium HD but no actual hardware acceleration support, a headphone amplifier is included, and better shielding. Still uses a DSP with only 5.1 support. Still no HDMI output.

Ah, but an A/V receiver! How about passing a digital signal to that, bypassing the sound card’s analog section and using the receiver’s superior DAC as well as actual home theater passive speakers, including center channel and multidirectional surround channels? That’s what almost every surround sound PC gamer uses, and it’s the best option. The problem here is the lack of HDMI output. HDMI can carry an uncompressed 7.1 sound signal. Since sound cards (except maybe one I think?) don’t have HDMI, we instead have to use S/PDIF out (almost always via optical TOSLINK rather than coaxial, and on that note coaxial is of course slightly better…). For surround sound, S/PDIF can only carry a compressed 5.1 signal via encoders like DTS Connect and Dolby Digital Live.
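A quick back-of-the-envelope sketch shows why surround has to be compressed to fit down S/PDIF. The sample rate and bit depths are our assumptions for a typical setup, and the encoder bitrates are approximate typical values rather than figures from any spec sheet quoted here.

```python
def pcm_bitrate_mbps(channels: int, sample_rate_hz: int, bit_depth: int) -> float:
    """Raw bitrate of uncompressed PCM audio, in megabits per second."""
    return channels * sample_rate_hz * bit_depth / 1_000_000


# Assumed formats: 48 kHz sampling, 24-bit surround, 16-bit stereo.
uncompressed_71 = pcm_bitrate_mbps(8, 48_000, 24)  # 7.1 = 8 channels
uncompressed_51 = pcm_bitrate_mbps(6, 48_000, 24)  # 5.1 = 6 channels
stereo_pcm = pcm_bitrate_mbps(2, 48_000, 16)       # what S/PDIF carries uncompressed

# Assumed typical encoder output bitrates (approximate).
DTS_CONNECT_MBPS = 1.5
DOLBY_DIGITAL_LIVE_MBPS = 0.64

print(f"7.1 PCM: {uncompressed_71:.3f} Mbit/s")
print(f"5.1 PCM: {uncompressed_51:.3f} Mbit/s")
print(f"Stereo PCM: {stereo_pcm:.3f} Mbit/s")
print(f"5.1 PCM is about {uncompressed_51 / DTS_CONNECT_MBPS:.1f}x the DTS stream")
```

Under these assumptions, the compressed 5.1 stream is about the size of plain stereo PCM, while uncompressed 5.1 or 7.1 needs several times that. Hence the need for HDMI.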

The compression with DTS Connect/DTS Interactive isn’t horribly offensive. Games don’t use many high quality/bandwidth sounds and many are computer generated sounds anyway. Still, better 7.1 support is nice as is uncompressed sound via HDMI especially for HTPCs. It’s still possible to use uncompressed surround via HDMI using a video card or onboard graphics HDMI connector, but some may want to use various sound card features which onboard wouldn’t provide, not to mention OpenAL/DirectSound3D/EAX games need a Creative X-Fi sound card to sound their best.

Sound cards could be of some use to audiophiles, at least if they had digital outputs other than just optical TOSLINK. They need to offer coaxial (RCA jack), HDMI 2.0, USB, and RJ45. That would be better than connecting a DAC or digital interface to onboard audio: better SNR, and the PCI-Express bus is less busy and cleaner than USB. They could be useful audio interfaces if they had a robust input section as well; Creative’s Titanium HD and ZxR have the ADC (analog to digital converter) quality, but again lack the connectivity to be particularly useful as digital interfaces for many people.

In conclusion, we need games and sound cards to once again have hardware acceleration support. Bring back X-Fi, rename it if you want. Make OpenAL more appealing to game studios, don’t let these studios be attracted to Wwise, XAudio2, FMOD, or anything else. Furthermore, 7.1 support needs to be standard, and so does the inclusion of HDMI output. Other digital outputs and inputs would be nice too for maximum connectivity, flexibility, and usefulness, perhaps through a daughterboard card. But there is absolutely no sign of the sound card industry digging itself out of its grave and catching up with the times.

Keyboards

As far as keyboards go, there are mechanical keyboards and then there’s everything else. For those not very knowledgeable about mechanical keyboards (and thus keyboards in general), give this a read.

So how are keyboards behind? At least as of late 2016 we have seen mechanical keyboards creep down into more affordable price ranges (around $60), offering quality that non-mechanical keyboards can’t even dream of. But that’s not enough. First and foremost, more keyboards need a lower profile chassis design to make cleaning much easier, like the keyboard pictured at the top of this page (a custom model from GON’s keyboard works) or like Corsair’s models. Furthermore, we need more design variety with mechanical keyboards. Many people like low profile laptop-style keycaps, so some mechanical keyboards (affordable ones too) should come with those by default, just to open up to wider audiences.

People also like silent typing, so o-rings (rubber o-rings installed inside the keycap to absorb sound and soften impact) should be standard on “silent” models, which is a niche that needs to be created in the mechanical keyboard market.

But let’s get to the good stuff, the real technological revolution, since everything above can already be done just by buying aftermarket keycaps and o-rings: analog mechanical switches. These use IR LEDs to create what is essentially a pressure sensitive switch, without relying on capacitive membranes like standard keyboards or controller buttons. They are a far more reliable and responsive alternative that still provides greater functionality. Refer to the proposed design below from Aimpad.

Such a switch would feel no different from familiar Cherry mechanical switches, but would provide benefits for gaming thanks to greater functionality. For example, in a game you could press the W key lightly to walk and press it all the way down to run. The same would apply to all player movements, and to vehicles. And before anyone says it, no, the key press is not longer with such switches. These analog mechanical switches have three activation points across the standard length key press.

Other games would come up with their own use of such keyboard switches. Most games that have no separate walk key would no longer suffer, as these switches would enable different levels of movement like walking and running.
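As a rough illustration of how a game might consume such a switch, here is a minimal sketch that maps key travel to a movement tier. The threshold values, the tier names, and the idea of the keyboard reporting travel as a 0.0 to 1.0 fraction are all our own assumptions, not Aimpad’s actual design.

```python
from enum import Enum


class Move(Enum):
    IDLE = 0
    WALK = 1
    JOG = 2
    RUN = 3


# Hypothetical activation points, as fractions of full key travel.
ACTIVATION_POINTS = (0.25, 0.60, 0.95)


def movement_for_travel(travel: float) -> Move:
    """Map normalized key travel (0.0 = released, 1.0 = bottomed out) to a movement tier."""
    if not 0.0 <= travel <= 1.0:
        raise ValueError("travel must be between 0.0 and 1.0")
    # Count how many activation points the key has passed.
    passed = sum(travel >= point for point in ACTIVATION_POINTS)
    return Move(passed)


for travel in (0.0, 0.3, 0.7, 1.0):
    print(f"travel {travel:.1f} -> {movement_for_travel(travel).name}")
```

A light press on W lands in the first tier and a full press in the last, with no separate walk or sprint key needed.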

Aimpad never reached its Kickstarter goal and other variations of analog mechanical switches never made it beyond the prototype stage. At least RGB LED backlighting is becoming a standard, and at least mechanical keyboard prices are continuously trickling down, but the keyboard industry is still at a crawl. Although it is not as stuck in the mud as game controllers of course.

NVIDIA Graphics Cards

No, we’re not AMD fanboys, but NVIDIA needs to get with the times, as their Vulkan and DX12 performance shows. To put it simply, Vulkan and DX12 are designed around parallel processing, which is far more efficient. AMD’s GCN architecture is designed this way. NVIDIA’s GeForce architecture is not; it is sequential and linear, and thus limiting for games. Vulkan and DX12 can bring some stupidly good performance and/or gameplay improvements, but for that we need parallel GPU architecture to be the standard. Someone wake NVIDIA up.

NVIDIA is no stranger to parallel computing and compute performance. See their Quadro cards or even past GeForce architectures like Fermi. Volta should be what we need, but NVIDIA is clearly waiting until the last minute, encouraging the use of primitive and very limiting graphics APIs until then, and slowing down engine development (e.g., Epic Games is too scared to take the next step in Vulkan and DX12 implementation with Unreal Engine 4 since they don’t want to leave NVIDIA behind).

Vulkan/DX12 can reduce CPU bottlenecks and driver overhead, deliver epic multi-GPU scaling (including using both NVIDIA and AMD GPUs together), and of course the draw call potential can lead to epic gameplay improvements. In a few years, when Vulkan and DX12 take over as the primary graphics APIs, we will see some very nice improvements in games. It’s a shame it can’t happen now on a large scale.

This brings up another aspect, and something not as specific to NVIDIA: Multi-GPU technologies, like SLI and CrossFire. These technologies have advanced poorly and NVIDIA is actually regressing and limiting their cards to 2-way SLI only. With the potential Vulkan and DX12 have for multi-GPU improvements, everyone including both NVIDIA and AMD and also game studios should be supporting it.

On a side note, NVIDIA’s continued push for exclusivity and proprietary technologies is only limiting themselves and damaging the gaming industry. Volta and future NVIDIA GPUs will be more capable in OpenCL. By that time, NVIDIA PhysX should be ported to OpenCL and not be limited to NVIDIA GPUs. Physics are still in the stone age, with 2004’s Half-Life 2 having more realistic physics, and simply more physics, than most modern games. PhysX is incredibly realistic in all areas, and could bring massive performance and gameplay improvements if it weren’t limited to CPUs and NVIDIA GPUs.

Thankfully, PhysX has since been ported to DirectCompute, showing performance improvements over CUDA even (which is bizarre). Hopefully OpenCL is next, and hopefully we see the growth in game physics that the industry so desperately needs.

As a side note, it would be nice if high quality shrouds using neutral colors and RGB LEDs, such as this EVGA GTX 1080 FTW, would become a standard design for high end GPUs so that they can match with any PC.

But NVIDIA’s exclusivity won’t change any time soon. At least their GPU architecture will presumably cease being so terribly outdated and limiting in 2018 with the release of Volta. Of all the hardware industries we have talked about in this article, this one has the most promise of catching up.

RAM, CPUs, Motherboards

Here, we are speaking of both Intel and AMD platforms. Due to Intel’s significant lead over AMD between 2009 and 2016 (in the desktop environment), they have gotten extremely complacent. DDR4 RAM is new to desktop computers, while GPUs have had GDDR5 (quad data rate) for years, and some now use GDDR5X (faster GDDR5) or even 3D stacked memory (HBM, on the AMD R9 Nano/Fury/Fury X). This is one of the things that triggers us the most. If the industry desired, there could have been DDR5 RAM in desktop computers already, and perhaps HBM 2 on Skylake-X and Kaby Lake-X.

Then there are the very slow, incremental improvements Intel has been showing, with price increases as well, though AMD is to blame here too, as they were not able to keep up. This has led to Intel limiting and gimping their own processors more than ever. They are restricting overclocking more than ever, and have stooped to no longer soldering the IHS (integrated heat spreader) onto their more mainstream CPUs, using cheap thermal paste instead, which leads to temperature limited overclocking. Those who want to avoid this have to pay more for the highest end X platform (Broadwell-E currently), where Intel still solders the IHS to the CPU die, giving much better contact and thus temperatures; this process used to be standard across all of their CPUs. But then Broadwell-E has inferior clock speeds and IPC to Skylake/Kaby Lake, so the latter is still superior for the vast majority of video games. We are all caught between a rock and a hard place.

They also limit the i7 5820K and i7 6800K (last and current gen lowest end desktop X99 processors, $389 and $434 MSRP respectively) to 28 PCI-E lanes, which is not enough to run two GPUs at full x16 bandwidth. If you want to use two GPUs, you had better spend $600 on their higher end processor with more PCI-E lanes! Spending more just for X99 isn’t enough; you have to get at least their $600 processor as well, since a few of today’s cards and many future ones will strongly benefit from full PCI-E 3.0 x16 bandwidth.
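The lane math is worth spelling out. The sketch below uses the standard PCI-E 3.0 figures (8 GT/s per lane with 128b/130b encoding). The 40 lane count for the higher end processor and the x16/x8 split mentioned afterwards are our assumptions for illustration, not numbers from the paragraph above.

```python
PCIE3_GT_PER_S = 8.0             # PCI-E 3.0 raw transfer rate per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding
LANES_PER_FULL_SLOT = 16


def link_gb_per_s(lanes: int) -> float:
    """Approximate one-way PCI-E 3.0 bandwidth in GB/s for a link of the given width."""
    return lanes * PCIE3_GT_PER_S * ENCODING_EFFICIENCY / 8


def fits_full_x16(cpu_lanes: int, gpu_count: int) -> bool:
    """True if every GPU can get a full x16 link from the CPU's lanes."""
    return gpu_count * LANES_PER_FULL_SLOT <= cpu_lanes


print(fits_full_x16(28, 2))  # 28 lane processors such as the i7 5820K / 6800K
print(fits_full_x16(40, 2))  # assumed 40 lanes on the higher end processor
print(f"x16: {link_gb_per_s(16):.2f} GB/s, x8: {link_gb_per_s(8):.2f} GB/s")
```

Two cards at x16 need 32 lanes, so a 28 lane CPU has to drop at least one of them to a narrower link (x16/x8 would be a typical split), halving that card’s bandwidth to the CPU.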

Our only hope here is strong competition from AMD. We have Ryzen, and while Ryzen 5 succeeds at decimating the Core i3 lineup (something Intel did themselves) and shutting down most of the Core i5 lineup (especially if you include long term usefulness), Ryzen 7 still loses handily in games to Skylake/Kaby Lake and Broadwell-E. This can be remedied with BIOS updates, OS updates, and game updates, but Ryzen’s limited clock speeds and overclocking and memory speed will remain problematic until a refresh. A refreshed Ryzen though, that doesn’t skimp on gaming performance (since the issue is not in Ryzen cores themselves), will be just what we need.
