A game is nothing but an idea without an engine, and in today’s gaming industry there are many options when it comes to game engines, physics engines, audio APIs, and more. When cost and royalties are factored in, the choice may become difficult. But in this article we are putting that aside and focusing purely on video game technology, and believe it or not, for the most part it is quite clear which technologies have the most potential.

By game technology, as you may have guessed, we are largely referring to game engines, but also physics engines, graphics APIs, and audio APIs. The fact of the matter is that in a modern gaming PC, most of the CPU sits idle during games; only the GPU is really used intensively, and even then many of its best features are completely ignored. Game and game engine programming is stuck in the 2000s, and in this article we’ll look at how this should change.

The Engine

Several engines are worthy of respect, and many are not. Far too many studios stick to their own inferior, outdated engines just because of familiarity. Licensing cost isn’t a valid excuse anymore since there are fantastic options with none, but we’ll get to that later. Bethesda Game Studios and Bohemia Interactive are perhaps the most guilty of sticking to awfully outdated engines, since their games need a new engine more than most others.

Unreal Engine 5 is the latest and greatest version of Unreal Engine, set to release in 2021. Unreal Engine 4 revolutionized game development to an incredible degree, and UE5 will be doing the same. At a fundamental level, the engine is incredibly modular, extensible, and streamlined, with a huge number of integrations. No other engine allows you to make so many different types of games on so many different platforms in such an intuitive manner.

But UE5 goes further than that. Currently, its biggest new features are its virtual geometry system (Nanite) and real time global illumination system (Lumen) as shown in the video above. Being a continuation of Unreal Engine means it will also have features like Metahuman and Chaos Destruction and more shown below.

Then there’s the fact that various independent developers make their own (sometimes free) tools and plugins for Unreal Engine, and some become part of the main branch.

How about Infinity Weather?

How about FluidNinja LIVE?

How about UnrealWild? AI processed satellite imagery to Unreal Engine worlds with ease? Plus full terrain deformation? This isn’t out yet, but it has been in progress on Unreal Engine 4. The creators are likely waiting for UE5 and its tile-based rendering and general optimization improvements.

Sure, Chaos Physics and Infinity Weather and FluidNinja can be surpassed by PhysX integrations (especially if PhysX were to be ported to OpenCL with both GPU and multithreaded CPU utilization – the ultimate solution), but the fact that these exist in Unreal as plugins makes it curious why no one is using them. Curious and disappointing.

The performance, scalability, visual quality, and versatility of Unreal Engine 5 make it an obvious choice for most types of games. We really need to see games use it and use its best features intelligently. However, the licensing will probably be the same as that of Unreal Engine 4 – for any game that makes over $1 million, Epic Games will collect 5% royalties on gross revenue beyond that first $1 million (except for sales through the Epic Games Store, where the royalty is waived). Not bad at all, although that is 5% on top of what the game store takes (e.g. Steam’s 20-30%, GOG’s 30%, Sony’s 30%, Microsoft’s 12%). Also, not everyone will want to do business with Tencent, the Chinese company that holds a large minority stake in Epic Games.
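
To make the stacking of cuts concrete, here is a rough sketch of the arithmetic. It assumes the royalty is 5% of lifetime gross revenue above the first $1 million and that both the store's share and the royalty are taken from gross revenue; taxes, refunds, and store-specific exemptions are ignored. The function names are made up for illustration.

```python
ROYALTY_RATE = 0.05            # the 5% engine royalty discussed above
ROYALTY_THRESHOLD = 1_000_000  # assumption: only gross revenue above this is charged


def engine_royalty(gross_revenue: float) -> float:
    """Royalty owed on lifetime gross revenue (before the store's cut)."""
    return max(0.0, gross_revenue - ROYALTY_THRESHOLD) * ROYALTY_RATE


def developer_net(gross_revenue: float, store_cut: float) -> float:
    """What is left after the store's share and the engine royalty."""
    return gross_revenue * (1.0 - store_cut) - engine_royalty(gross_revenue)


if __name__ == "__main__":
    gross = 5_000_000
    for label, cut in [("30% store cut", 0.30), ("12% store cut", 0.12)]:
        print(label, round(developer_net(gross, cut)))
```

Under these assumptions the royalty itself is small next to the store's share: on $5 million gross it comes to $200,000, while a 30% store cut takes $1.5 million.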

What alternatives are there? Sadly none; no other engine comes close to its feature set.

The Physics Engine

The ideal game physics engine, if one simply wants the most advanced game, is NVIDIA’s GPU accelerated PhysX. This was originally created by Ageia, but NVIDIA bought them out and kept updating PhysX. GPU physics processing can potentially be much faster than CPU implementations, resulting in more potential physics effects in games as well as better performance. PhysX has been open source since December 2018 (though currently only PhysX 4 is open source, not PhysX 5), and has supported DirectCompute for even longer, so there is no acceptable reason not to use it anymore.

PhysX is by far the most advanced, realistic, and capable physics engine for games, although with room for so much more. It can do it all, whether you’re talking about solid object physics, soft body physics, fluids, gases, you name it. And it does it all on a level that is unbelievably realistic. The funny thing is, PhysX has been essentially this advanced for at least eight years. The reason it hasn’t caught on much is that for years it was limited to running on NVIDIA GPUs (via NVIDIA CUDA) or on CPUs, which are too inefficient. CPU physics will always be very limited, and game consoles use AMD graphics hardware, so GPU PhysX was out of the question. But this is no longer a limitation of PhysX, so it should be adopted and made mainstream, and for the sake of all that is good, it should not run (exclusively) on the CPU.

NVIDIA FleX is based on PhysX technology. It is basically a rebrand, a smaller library derived from PhysX.

All credit to the rightful owners of the videos and content below:

Even more impressive demonstrations here!

The most realistic solution to hope for is just for everyone else to catch up, but they never will. No one else seems to try as hard to accurately simulate this many kinds of physics. The best alternative is Unreal Engine’s Chaos physics, but it doesn’t cover as many areas of physics; something else must be used alongside it for fluid simulation.

There are some GPU PhysX games though, and those games have some of the most impressive physics… in one area. These games use PhysX in a very specific way, whether it’s the cloth physics of Mirror’s Edge or procedural chipping away of walls in Mafia 2. The Batman games have impressive small scale use of it, and Cryostasis: Sleep of Reason was once perhaps the best demonstration of a very specialized use of NVIDIA PhysX.

But even PhysX is far from an end game solution to game physics. It is good for simple, widely understood physics, but by default doesn’t do things other software does, such as the level of simulation of electrical physics seen in software used by electrical engineers. Or more precise thermodynamics, but one step at a time. Games need to first stop regressing like they currently are with their technology.

The Graphics API

One of the most significant video game technological advancements in a long time is today’s low level APIs, Vulkan and DirectX 12. We have AMD to thank for these, as both take inspiration from AMD’s Mantle (Vulkan being derivative). Keep this in mind when glorifying NVIDIA’s contributions to video game technology and ignoring AMD’s, since Mantle turned out to be one of the biggest contributions from either company.

DX12 and Vulkan are all about greater parallelism and reduced driver overhead, for potentially huge performance gains as 3DMark’s API overhead tests show. You can also see the performance of DOOM 2016 using its Vulkan renderer, Wolfenstein II: The New Colossus, and best of all DOOM Eternal to understand the performance gains these APIs offer.

They were initially the most performance oriented 3D graphics APIs, and now, with DX12 Ultimate, DirectX Raytracing, and Vulkan ray tracing, they provide the biggest graphics leap in maybe 20 years.

Check out the 3DMark DX12 Ultimate feature showcases. Mesh shaders can provide a 3x or greater performance boost in geometry-limited scenes. Sampler feedback can bring up to a 10% boost and underpins techniques such as tile-based texture streaming and texture space shading, each of which has its own performance and visual improvements; the latter is particularly helpful for VR gaming according to NVIDIA.

Then there’s variable rate shading, which can significantly improve performance on weaker hardware and is best suited to VR foveated rendering: it is applied to the peripheral area, where its visual downgrades are less noticeable. DX12 has one advantage over Vulkan right now, DirectStorage, which uses the GPU for decompression, reducing CPU overhead and leading to faster load times, faster texture streaming, and other benefits.
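
The foveated rendering idea can be sketched in a few lines. This is only an illustration of the selection logic, not code for any real graphics API: the rate names mirror the coarse shading rates that variable rate shading exposes, while the radii, the normalized screen coordinates, and the function names are assumptions chosen for the example.

```python
import math


def shading_rate(tile_center, gaze, inner_radius=0.15, outer_radius=0.35):
    """Pick a shading rate for one screen tile from its distance to the gaze point.

    Coordinates are normalized to [0, 1]. The radii are illustrative values.
    """
    distance = math.dist(tile_center, gaze)
    if distance <= inner_radius:
        return "1x1"  # full rate where the eye is looking
    if distance <= outer_radius:
        return "2x2"  # one shading result per 2x2 pixel block
    return "4x4"      # coarsest rate in the far periphery


def shading_rate_map(cols, rows, gaze):
    """Build a per-tile rate grid, the kind of data a shading rate image would hold."""
    return [
        [shading_rate(((c + 0.5) / cols, (r + 0.5) / rows), gaze) for c in range(cols)]
        for r in range(rows)
    ]


if __name__ == "__main__":
    for row in shading_rate_map(8, 4, gaze=(0.5, 0.5)):
        print(" ".join(row))
```

With eye tracking the gaze point moves every frame; without it, a fixed point at the center of each eye's view is the usual fallback.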

Are Voxels the Future?

When people think voxel games, right now they think Minecraft or Teardown – lego graphics, cubes. But just like polygons, more of them can be used to create higher resolution graphics. Are voxels the superior alternative to polygons, with the power of computers constantly increasing?

Voxels can lead not only to amazing quality, but unparalleled physics interactions, especially in destruction and deformation. And they seem relatively lightweight, when you look at the above Unreal Engine voxel terrain video being run on a GTX 1080 Ti, or how Teardown achieves everything it does while being fully ray traced. Here is an interesting article on the subject.

Voxels will also need their own physics engine, preferably one that uses OpenCL 2.0 or hipSYCL to offer both good multithreading and GPU acceleration.

If voxels are to take over (and it seems like they should), we need optimization improvements for them too: something like voxel occlusion culling and mesh/amplification shaders, but for voxels, preferably with support for dynamic voxel meshes if possible. It would also be a good idea to combine voxels with traditional polygons, using the latter only for static meshes along with mesh/amplification shaders.
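
As a small illustration of why voxels suit both destruction and culling, here is a minimal sketch using a sparse set of voxel coordinates. It is not true camera-based occlusion culling; it is the simpler, view-independent test of skipping voxels that are completely buried, re-run after a spherical chunk is carved out. All names here are hypothetical.

```python
NEIGHBOR_OFFSETS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def solid_cube(size):
    """A size x size x size block of voxels stored as a set of integer coordinates."""
    return {(x, y, z) for x in range(size) for y in range(size) for z in range(size)}


def exposed_voxels(voxels):
    """Voxels with at least one empty face neighbor; buried ones never need drawing."""
    return {
        (x, y, z)
        for (x, y, z) in voxels
        if any((x + dx, y + dy, z + dz) not in voxels for dx, dy, dz in NEIGHBOR_OFFSETS)
    }


def carve_sphere(voxels, center, radius):
    """Destruction: remove every voxel whose coordinate lies within radius of center."""
    radius_sq = radius * radius
    return {
        v for v in voxels
        if sum((a - b) ** 2 for a, b in zip(v, center)) > radius_sq
    }


if __name__ == "__main__":
    block = solid_cube(4)
    print(len(block), "voxels,", len(exposed_voxels(block)), "exposed")
    damaged = carve_sphere(block, center=(0, 0, 0), radius=1)
    print(len(damaged), "voxels after damage,", len(exposed_voxels(damaged)), "exposed")
```

Destruction is just a set operation here, with no mesh to re-cut, which is the property that makes dynamic voxel geometry attractive.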

At the very least, voxels should be explored more immediately. But they do seem like they should be the future. The near future. Throw that into the group of other technologies that seem like they should be the future, such as MicroLED displays, hydrogen fuel cell vehicles, physics based sound propagation, graphene, carbon nanotubes, optical circuits, and so on. So many of these great technologies don’t get adopted because investors are already invested elsewhere.

The Audio API

Sound processing has largely gone backwards over the years, with the old DirectSound3D games from 10-15 years ago having more advanced sound processing and effects than any of today’s games. This is best demonstrated by the first two Thief games, but the majority of mainstream PC games from the late 1990s and 2000s demonstrate it to some degree.

OpenAL was the open source successor to DirectSound3D, until Creative Labs took it over, putting an end to the “open source” element. These audio APIs allow for advanced 3D HRTF and various dynamic environmental effects, most commonly reverb, but they can do far more than that, such as advanced distortion of sound, environmental occlusion, even ring-modulation effects and more.

The 3D HRTF makes a huge difference in spatial sound, enabling binaural audio simulation for stereo users and more precise surround sound as well. Luckily, such technology was not completely ignored; AMD created TrueAudio, their own GPU accelerated sound API, and TrueAudio Next was born from this. Not only do they offer the most advanced 3D sound and improvements upon all the features from OpenAL and EFX, but they also bring a substantial audio evolution: physics-based sound propagation. That’s right, simulating sound waves using physics, completely revolutionizing how audio works in games.

AMD’s TrueAudio led to the creation of the open source Steam Audio, our choice for champion of audio APIs. It contains all TrueAudio/TrueAudio Next features, plus plugins for both the Unreal and Unity engines. So why isn’t anyone using these?

Steam Audio also does not require a sound card to get the best out of it. It is continuously developed, not dead like OpenAL is, and can even support Radeon Rays (which would work on any GPU that supports OpenCL 1.2 and higher, so NVIDIA and Intel are not left out). Who knows what the future holds for Steam Audio? The next step forward would be to use hardware ray tracing (instead of Radeon Rays software ray tracing) to make the sound even more accurate.

But even Steam Audio and TrueAudio Next are not perfect. Steam Audio doesn’t have built-in support for Dolby Atmos or DTS:X, so height speakers cannot be used. There’s no surround sound HRTF for VR gamers who would rather use a surround sound system than headphones, so the ultimate sound solution does not exist for VR. The existing ray tracing solutions for its physics based sound propagation are also very limited. Only Intel’s Embree ray tracer (which works on any modern CPU) supports dynamic geometry occlusion: if a closed door is blocking sound and the door opens, the door will no longer occlude the sound. But this does not extend to destructible/deformable geometry; for example, if sound is propagating down a corridor and the ceiling collapses midway, the collapsed rubble won’t block the sound. Radeon Rays has no support whatsoever for dynamic geometry. This issue would be solved by using Vulkan ray tracing or DirectX ray tracing instead, but Radeon Rays should also improve, since dynamic geometry is possible with software ray tracing.
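
The door and rubble examples can be made concrete with a toy direct-path occlusion test. This is not Steam Audio's API or how any particular ray tracer is implemented; it is a minimal sketch that treats occluders as axis-aligned boxes and checks whether the straight line from source to listener passes through one, to show that dynamic geometry only requires the occluder list to change between queries. The `Box` type and function names are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned occluder; blocking=False models something like an open door."""
    lo: tuple
    hi: tuple
    blocking: bool = True


def segment_hits_box(a, b, box):
    """Slab test: does the straight segment from a to b pass through the box?"""
    t_min, t_max = 0.0, 1.0
    for axis in range(3):
        delta = b[axis] - a[axis]
        if abs(delta) < 1e-12:
            # Segment is parallel to this slab: it must already lie inside it.
            if a[axis] < box.lo[axis] or a[axis] > box.hi[axis]:
                return False
            continue
        t0 = (box.lo[axis] - a[axis]) / delta
        t1 = (box.hi[axis] - a[axis]) / delta
        if t0 > t1:
            t0, t1 = t1, t0
        t_min, t_max = max(t_min, t0), min(t_max, t1)
        if t_min > t_max:
            return False
    return True


def is_occluded(source, listener, occluders):
    """True if any blocking occluder sits on the direct path."""
    return any(o.blocking and segment_hits_box(source, listener, o) for o in occluders)


if __name__ == "__main__":
    source, listener = (0.0, 1.0, 1.0), (10.0, 1.0, 1.0)
    door = Box((4.9, 0.0, 0.0), (5.1, 3.0, 2.0))
    scene = [door]
    print("door closed:", is_occluded(source, listener, scene))
    door.blocking = False  # the door opens
    print("door open:", is_occluded(source, listener, scene))
    scene.append(Box((2.0, 0.0, 0.0), (3.0, 3.0, 2.0)))  # ceiling collapses into rubble
    print("after collapse:", is_occluded(source, listener, scene))
```

A real propagation system traces many rays and models reflections and diffraction, but the requirement is the same: the acoustic scene has to be updated when the level geometry changes.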

We wrote an article about Steam Audio here.

To find out what audio API games are using, check out the link below although it isn’t complete.

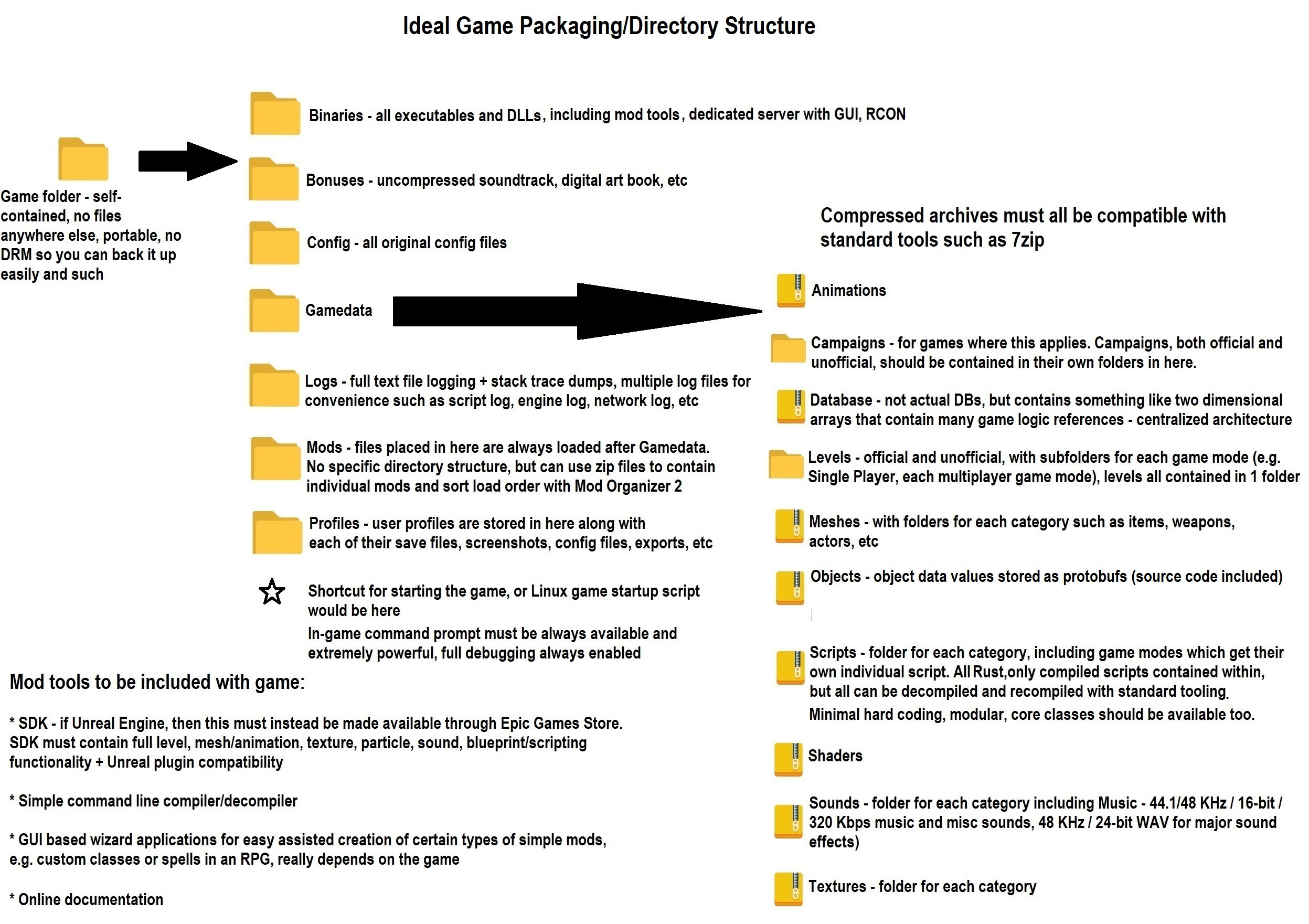

Ideal Game Directory Structure, Mod Loading, Graphics/Audio Options and Settings

This section is mostly for engine developers. Shown above is how I propose a game project be architected. It is neat and organized, which many engines are not, and it is self-contained and portable so that you can easily back up games or run several copies at once, something critical for moddable games. I’ve adopted Unreal Engine’s config file redundancy (master and per user profile). The Gamedata directory is self-explanatory and straightforward, unlike in so many engines, and all game engines should abolish proprietary compression formats that need proprietary tools to work with. After all, modding only increases sales if anything.
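
The master plus per-profile config idea amounts to layered loading: read the master file first, then let the user's file override individual keys. Here is a minimal sketch using Python's standard `configparser`; the INI format, section names, and keys are assumptions for illustration, not the layout of any actual engine.

```python
import configparser

MASTER_INI = """
[Graphics]
resolution = 1920x1080
vsync = true

[Audio]
hrtf = true
"""

USER_INI = """
[Graphics]
vsync = false
"""


def layered_config(master_text, user_text):
    """Load the master settings, then apply per-profile overrides on top."""
    parser = configparser.ConfigParser()
    parser.read_string(master_text)  # defaults shipped with the game
    parser.read_string(user_text)    # anything set here wins
    return parser


if __name__ == "__main__":
    config = layered_config(MASTER_INI, USER_INI)
    for section in config.sections():
        print(section, dict(config[section]))
```

Because the user file only stores what differs from the master, deleting it resets a profile to defaults without touching the game's own files.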

Then there’s the mod loading. A self-contained mods folder is a must, as is being able to sort mod load order or easily enable/disable mods, preferably without just deleting folders from the Mods directory. That is why I propose that it function similarly to the Aurora engine’s “Override” folder, in that it doesn’t need structure: the game should load all its original files, and then search the Mods folder for files to overwrite them with. But it should still be possible to use Mod Organizer 2 to organize it.
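
The override behaviour described here can be sketched as building a lookup table of files: original game files go in first, then each enabled mod is applied in load order, with later entries replacing earlier ones. This is a hypothetical illustration; matching purely by lowercase file name (so the Mods folder needs no internal structure) and the folder names are assumptions.

```python
from pathlib import Path


def build_file_table(gamedata_dir, mods_dir, load_order):
    """Map each file name to the path that should actually be loaded.

    load_order lists only the enabled mods, lowest priority first, so a mod is
    disabled by leaving it out of the list rather than by deleting its folder.
    """
    table = {}
    roots = [Path(gamedata_dir)] + [Path(mods_dir) / name for name in load_order]
    for root in roots:
        if not root.is_dir():
            continue  # a missing mod folder is simply skipped
        for path in sorted(root.rglob("*")):
            if path.is_file():
                table[path.name.lower()] = path  # later roots overwrite earlier ones
    return table


if __name__ == "__main__":
    table = build_file_table("Gamedata", "Mods", ["HDWalls", "GrittyWalls"])
    for name, path in sorted(table.items()):
        print(name, "->", path)
```

Since the load order is just a list, an external manager could generate it, and reordering or disabling a mod never touches the files on disk.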

And for any game with custom campaigns, that should have its own directory as illustrated above under Gamedata. After all, why overcomplicate things?

While we’re discussing things of this nature, let’s also mention the inclusion of a command prompt, also known as a console. Every game should provide a full-fledged command prompt; if need be, just lock it behind another console command or a startup parameter. Your games are buggy, and a good command prompt can help us get past that or just tinker with other things to our liking. It should have full control over most of the game, such as spawning/despawning, quests, and network connectivity for multiplayer, plus a proper search function, scripting capability (especially for Lua games since no compilation is needed), the ability to execute scripts from only a certain directory, etc. Think Fallout 4’s and Skyrim SE’s console but even more powerful.
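
A console with these properties does not need much machinery. The following is a minimal sketch, not modeled on any specific game's console: a command registry, a search function over names and help text, and script execution that refuses any path outside a designated scripts directory. The class, its methods, and the example commands are all hypothetical.

```python
from pathlib import Path


class Console:
    """Tiny command console: registry, search, and directory-restricted scripts."""

    def __init__(self, script_dir):
        # Scripts may only be executed from inside this directory.
        self.script_dir = Path(script_dir).resolve()
        self.commands = {}

    def register(self, name, func, help_text=""):
        self.commands[name.lower()] = (func, help_text)

    def search(self, term):
        """Return command names whose name or help text contains the term."""
        term = term.lower()
        return sorted(
            name for name, (_, help_text) in self.commands.items()
            if term in name or term in help_text.lower()
        )

    def run(self, line):
        parts = line.split()
        if not parts:
            return None
        name, args = parts[0].lower(), parts[1:]
        if name not in self.commands:
            return f"unknown command: {name}"
        func, _ = self.commands[name]
        return func(*args)

    def exec_script(self, relative_path):
        """Run each non-empty line of a script, rejecting paths that escape script_dir."""
        target = (self.script_dir / relative_path).resolve()
        if not target.is_relative_to(self.script_dir):
            raise PermissionError(f"{relative_path} is outside the script directory")
        return [self.run(line) for line in target.read_text().splitlines() if line.strip()]


if __name__ == "__main__":
    console = Console("Scripts")
    console.register("spawn", lambda kind, count: f"spawned {count} x {kind}", "Spawn entities")
    print(console.run("spawn crate 3"))
    print(console.search("spa"))
```

Resolving the path before checking it is what stops `../` tricks; locking the whole console behind a startup parameter would just be a flag checked before `run` is ever reachable.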

With all this cutting edge technology, some may wonder about graphics settings and how scalable such a game would be. The truth is, the latest and greatest technology is more scalable and more usable on lower end hardware than ever before. Optimization was top priority for both Vulkan and DirectX 12 after all. Using all of this technology, here are ideal graphics and sound settings that I’ve come up with.

Yes, DX12 Ultimate and the equivalent Vulkan feature set, along with real time ray tracing and a mesh shader geometry rendering pipeline (Amplification shaders -> Mesh shaders -> Pixel shaders and any Compute shaders, no other shader types), should be the minimum. Why? Because consoles support it and console spec should be the minimum. Even phones support it. Modern renderers shouldn’t even support dynamic lighting or ambient occlusion without ray tracing.

Minimum system requirements for modern games should be as follows:

- Windows 11 or Linux

- AMD Ryzen 5 3600 / Intel Core i5 10400

- 16GB RAM

- AMD Radeon RX 6600 / NVIDIA GeForce RTX 2060 SUPER

This hardware is several years old by now and actually below PS5 / Xbox specification. Consoles should be the baseline for minimum requirements of multiplatform games. PC exclusives are free to raise the minimum requirements.

If for some reason you want to add motion blur (unnecessary since over 99% of gamers are using sample and hold displays that add infinitely more motion blur than we perceive in reality) or chromatic aberration (should always be present only where it makes sense, like through distorted glass or water) then make them separate options. But do consider these arguments against it.

Conclusion

The unfortunate conclusion is that most of this tech won’t be put to much good use. Or all of it even. Games will be released on Unreal Engine 5, some will use groundbreaking new technology like Quixel Megascans and ray-tracing, along with the other features mentioned above like Metahuman and AutoLandscape. None will use Chaos Destruction, just like how GPU PhysX died a long time ago as did physics in general outside of VR games. This tech will be used… to make typical inferior modern games, many of which will just be wannabe movies that sacrifice gameplay and narrative design in favor of “cinematics” while simultaneously not being good movies.

At the end of the day, games made in 1998 by 20 dudes carving onto stone tablets, as shown below, will reign supreme over modern games made by 100+ people using modern technology.

Ultimately, game development today can be split up into three categories:

- “We’re an AAA studio, let’s figure out how to spit out the highest grossing product in the shortest amount of time. Found a successful formula? Stick with it. We develop in cycles, no time for major new features that won’t sell more anyway!”

- “Alright guys, we aren’t very skilled or capable but we see that a lot of nostalgic gamers like old games and old graphics. Let’s just copy that – make old games with old technology, the actual quality of the game is irrelevant. If it appeals to nostalgia, it will sell!”

- “The game industry is full of the game creators described above, and we’re sick of them! We want to make our own vision a reality, however because we position the art above all, we have no investors, no money, barely any employees, and not much actual development experience or skill. The end result will end up being disappointing and probably broken since we didn’t have the resources to make our complete dream a reality.”

We need the tech, and we need the games to use it well, like PC games did in the 2000s. As it stands, it’s absolutely embarrassing that some of the best game technology showcases come from very old games, such as the AI of Unreal and Unreal Tournament or even F.E.A.R. and S.T.A.L.K.E.R.’s A-Life (especially when you look at mods), or the physics of the Red Faction games, Half-Life 2, Frictional Games titles, F.E.A.R. again, Dark Messiah: Of Might and Magic, and Crysis. Even classic 1990s and early 2000s PC titles were often more physics enabled than today’s static games. There has been some physics evolution in smaller production modern games though (namely Exanima), and VR is really pushing the physics envelope, best demonstrated by Blade & Sorcery and Half-Life: Alyx.

NVIDIA’s GPU accelerated PhysX, now open source, has so much potential to take gaming to the next level. Games will no longer be so static; we need more of everything to be physics based. This doesn’t just mean destructible everything (though that’s a start); it means more lifelike worlds. Physics aids stealth in games like Thief and SOMA, it allows for immersive gaming in general, it is used famously for problem solving challenges in Half-Life 2, it is used to greatly bolster the amount of violence and carnage you can orchestrate in F.E.A.R. and Dark Messiah: Of Might and Magic, and it has so much more potential to transform games in ways we haven’t seen before… but it needs to be optimized, and the tech is there for it.

PhysX is never used to its full potential and GPU physics in general still aren’t used for some mind boggling reason. Then there’s sound – the first two Thief games demonstrate how important sound can be in gameplay, and no game I know of has attempted to demonstrate it since. Once again, the technology is here, thanks to Steam Audio and AMD TrueAudio Next. We will do a separate article on the importance of sound.

Of course, there are other beneficial technologies that the industry is not pushing for strongly enough. How about better surround standards? We mentioned Dolby Atmos and DTS:X earlier; having these as standard would be ideal. As far as graphics technology goes, we’re getting there in AAA gaming thanks to HDR and ray tracing. One area that’s really lacking in modern gaming is in AI, as there hasn’t even been a push to evolve it, and the best AI showcases are mostly in older games such as F.E.A.R. and S.T.A.L.K.E.R.

The fact that the industry doesn’t push for any of these technologies, except for the graphics APIs discussed (and even then, DX12 more than Vulkan, which is a mistake), shows how misguided and flawed the industry (as well as NVIDIA) is. It is just like with the games themselves, where the goal is usually not to make an improved product but to maximize profits and minimize expenses, which is especially amusing when one considers how many big name publishers make such incorrect assumptions about what consumers really want.

Another thing we have noticed is the number of gamers who haven’t noticed this technological revolution, with technologies such as Unreal Engine 5 and all its plugins, DirectCompute PhysX and the untapped theoretical potential of an OpenCL PhysX, Steam Audio and TrueAudio Next.

When discussing technologically innovative game concepts, so many gamers will state that they’re not possible to run, but the videos above demonstrate otherwise. Performance is not a limitation; all this tech can run at high frame rates and high resolutions on modern hardware, and it can be very scalable. The optimization technology is here. AAA game studios enjoy your ignorance though, so that they can keep pushing out games with 1990s console game design rather than putting in the work to evolve game design using modern technology.

Also, gamers tend not to realize how much all of this technology can benefit most kinds of games, not just a select few. RPGs, especially cRPGs, are one of the most technologically obsolete and regressed genres in the industry today, and they can benefit from all this tech at least as much as any shooter can.

When it comes to evolving game technology, gamers fall into one of two categories:

- “We can’t run it, performance will be too poor so I don’t want it.”

- “That tech will be cool… in very few kinds of games. I lack the imagination to see how it will evolve most games.”

Don’t forget that most or all of your favorite classic games used cutting edge technology to improve the game in many ways, including gameplay. That’s one of the things that make them classics.

Game studios see this, which further contributes to why game technology has stagnated and will continue to lack innovation. Both points are false too: all this tech can immensely improve most types of games, and performance isn’t a concern if it is implemented the right way, and the right way is often the easy way, at least with Unreal Engine, due to its extensive plugin and feature selection.

When 2000s games such as Half-Life 2 (2004), F.E.A.R. (2005), Dark Messiah: Of Might and Magic (2006), Crysis (2007), Cryostasis: Sleep of Reason (2009), Wolfenstein (2009), and believe it or not many others outclass most of today’s games in the same genres (especially AAA games) in some of the most important, gameplay-affecting technological areas such as physics, particle effects, AI, dynamic lighting and shadows, you know something is wrong with the gaming industry. Not to mention how almost every 2000s PC game is years more advanced than any of today’s games in sound processing. Don’t be mistaken, this is the current state of the industry. Technology hasn’t gone backwards, but the games have.

It would be really beneficial if Microsoft created and maintained their own game engine or Unreal Engine 5 DX12 Ultimate renderer that brilliantly showcases every single DX12 Ultimate feature. Microsoft should also create a new version of DirectSound that leverages DirectX ray tracing for fully path traced audio.

And it’d also be a dream come true if NVIDIA Lightspeed Studios created or maintained their own game engine or Unreal Engine 5 branch that’s more than just their NvRTX branch – they should have a branch or engine that showcases all of their game tech, not just the RTX brand of technology. PhysX 5, GPU accelerated AI, everything RTX and DLSS and Reflex of course, mesh shader virtual geometry pipeline, micro-meshes, SER, RTXIO.

Lastly, Futuremark does a good job constantly showcasing the latest DirectX features in 3DMark – it’d be awesome if they made that into a full game engine like Unigine did. Sure, no one used Unigine to make a game, but a Futuremark game engine could have more appeal. This level of mainstream innovation from all of the above companies would help get this amazing technology into games.
